A Guide to the Implementation and Modification of the Linux Protocol Stack

Glenn Herrin

TR 00-04

May 31, 2000

Abstract

This document is a guide to understanding how the Linux kernel (version 2.2.14 specifically) implements networking protocols, focused primarily on the Internet Protocol (IP). It is intended as a complete reference for experimenters with overviews, walk-throughs, source code explanations, and examples. The first part contains an in-depth examination of the code, data structures, and functionality involved with networking. There are chapters on initialization, connections and sockets, and receiving, transmitting, and forwarding packets. The second part contains detailed instructions for modifying the kernel source code and installing new modules. There are chapters on kernel installation, modules, the proc file system, and a complete example.


Chapter 1

1. Introduction

This is version 1.0 of this document, dated May 31, 2000, referencing the Linux kernel version 2.2.14.

1.1. Background

Linux is becoming more and more popular as an alternative operating system. Since it is freely available to everyone as part of the open source movement, literally thousands of programmers are constantly working on the code to implement new features, improve existing ones, and fix bugs and inefficiencies in the code. There are many sources for learning more about Linux, from the source code itself (downloadable from the Internet) to books to "HOW-TOs" and message boards maintained on many different subjects.

This document is an effort to bring together many of these sources into one coherent reference on and guide to modifying the networking code within the Linux kernel. It presents the internal workings on four levels: a general overview, more specific examinations of network activities, detailed function walk-throughs, and references to the actual code and data structures. It is designed to provide as much or as little detail as the reader desires. This guide was written specifically about the Linux 2.2.14 kernel (which has already been superseded by 2.2.15) and many of the examples come from the Red Hat 6.1 distribution; hopefully the information provided is general enough that it will still apply across distributions and new kernels. It also focuses almost exclusively on TCP/UDP, IP, and Ethernet - which are the most common but by no means the only networking protocols available for Linux platforms.

As a reference for kernel programmers, this document includes information and pointers on editing and recompiling the kernel, writing and installing modules, and working with the /proc file system. It also presents an example of a program that drops packets for a selected host, along with analysis of the results. Between the descriptions and the examples, this should answer most questions about how Linux performs networking operations and how you can modify it to suit your own purposes.

This project began in a Computer Science Department networking lab at the University of New Hampshire as an effort to institute changes in the Linux kernel to experiment with different routing algorithms. It quickly became apparent that blindly hacking the kernel was not a good idea, so this document was born as a research record and a reference for future programmers. Finally it became large enough (and hopefully useful enough) that we decided to generalize it, formalize it, and release it for public consumption.

As a final note, Linux is an ever-changing system and truly mastering it, if such a thing is even possible, would take far more time than has been spent putting this reference together. If you notice any misstatements, omissions, glaring errors, or even typos within this document, please contact the person who is currently maintaining it. The goal of this project has been to create a freely available and useful reference for Linux programmers.

1.2. Document Conventions

It is assumed that the reader understands the C programming language and is acquainted with common network protocols. This is not vital for the more general information but the details within this document are intended for experienced programmers and may be incomprehensible to casual Linux users.

Almost all of the code presented requires superuser access to implement. Some of the examples can create security holes where none previously existed; programmers should be careful to restore their systems to a normal state after experimenting with the kernel.

File references and program names are written in a slanted font.

Code, command line entries, and machine names are written in a typewriter font.

Generic entries or variables (such as an output filename) and comments are written in an italic font.

1.3. Sample Network Example

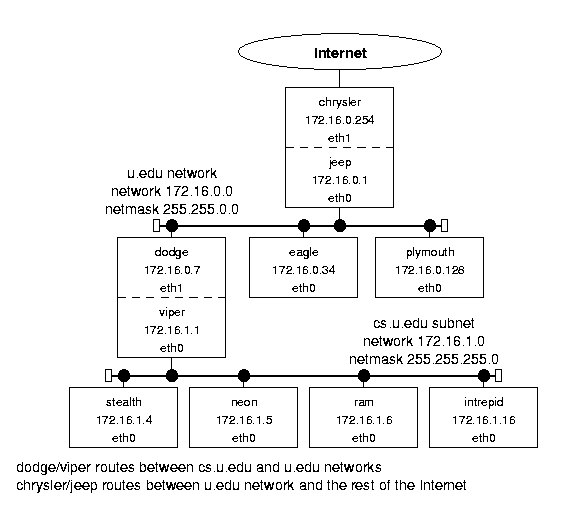

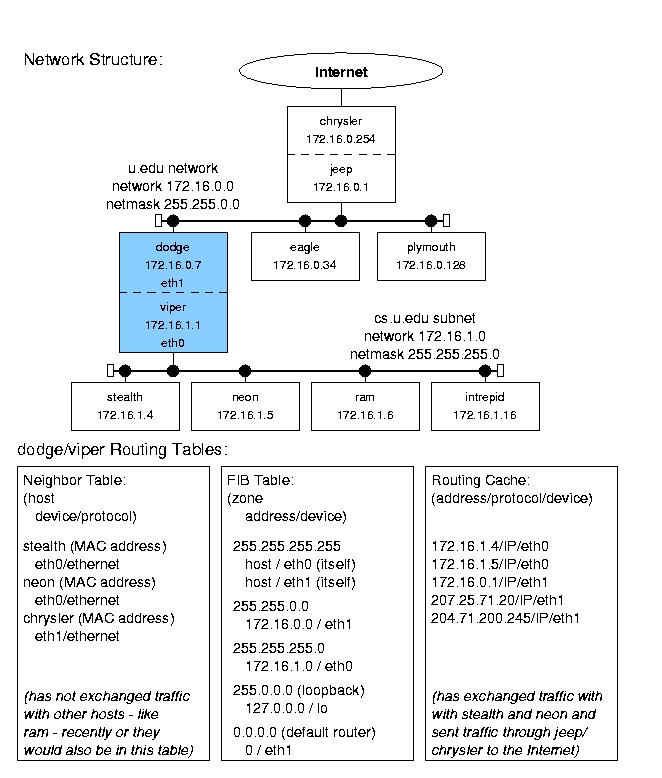

There are numerous examples in this document that help clarify the presented material. For the sake of consistency and familiarity, most of them reference the sample network shown in Figure 1.1.

Figure 1.1: Sample network structure.

This network represents the computer system at a fictional unnamed University (U!). It has a router connected to the Internet at large (chrysler). That machine is connected (through the jeep interface) to the campus-wide network, u.edu, consisting of computers named for Chrysler-owned car companies (dodge, eagle, etc.). There is also a LAN subnet for the computer science department, cs.u.edu, whose hosts are named after Dodge vehicle models (stealth, neon, etc.). They are connected to the campus network by the dodge/viper computer. Both the u.edu and cs.u.edu networks use Ethernet hardware and protocols.

This is obviously not a real network. The IP addresses are all taken from the block reserved for class B private networks (that are not guaranteed to be unique). Most real class B networks would have many more computers, and a network with only eight computers would probably not have a subnet. The connection to the Internet (through chrysler) would usually be via a T1 or T3 line, and that router would probably be a "real" router (i.e. a Cisco Systems hardware router) rather than a computer with two network cards. However, this example is realistic enough to serve its purpose: to illustrate the Linux network implementation and the interactions between hosts, subnets, and networks.

1.4. Copyright, License, and Disclaimer

Copyright (c) 2000 by Glenn Herrin. This document may be freely reproduced in whole or in part provided credit is given to the author with a line similar to the following:

Copied from Linux IP Networking, available at http://original.source/location.

(The visibility of the credit should be proportional to the amount of the document reproduced!) Commercial redistribution is permitted and encouraged. All modifications of this document, including translations, anthologies, and partial documents, must meet the following requirements:

- Modified versions must be labeled as such.

- The person making the modifications must be identified.

- Acknowledgement of the original author must be retained.

- The location of the original unmodified document must be identified.

- The original author's name may not be used to assert or imply endorsement of the resulting document without the original author's permission.

Please note any modifications including deletions.

This is a variation (changes are intentional) of the Linux Documentation Project (LDP) License available at:

This document is not currently part of the LDP, but it may be submitted in the future.

This document is distributed in the hope that it will be useful but (of course) without any given or implied warranty of fitness for any purpose whatsoever. Use it at your own risk.

1.5. Acknowledgements

I wrote this document as part of my Master's project for the Computer Science Department of the University of New Hampshire. I would like to thank Professor Pilar de la Torre for setting up the project and Professor Radim Bartos for being both a sponsor and my advisor - giving me numerous pointers, much encouragement, and a set of computers on which to experiment. I would also like to credit the United States Army, which has been my home for 11 years and paid for my attendance at UNH.

Glenn Herrin

Major, United States Army

Primary Documenter and Researcher, Version 1.0

<gherrin@cs.unh.edu>

Chapter 2

2. Message Traffic Overview

This chapter presents an overview of the entire Linux messaging system. It provides a discussion of configurations, introduces the data structures involved, and describes the basics of IP routing.

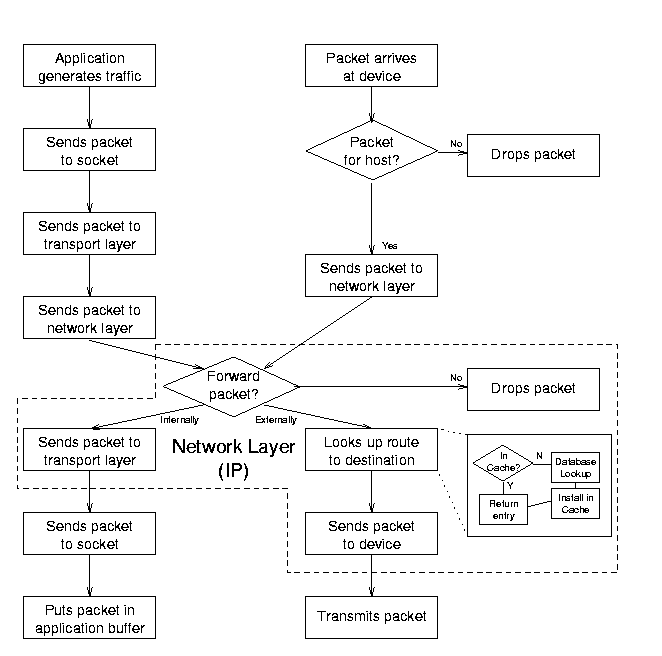

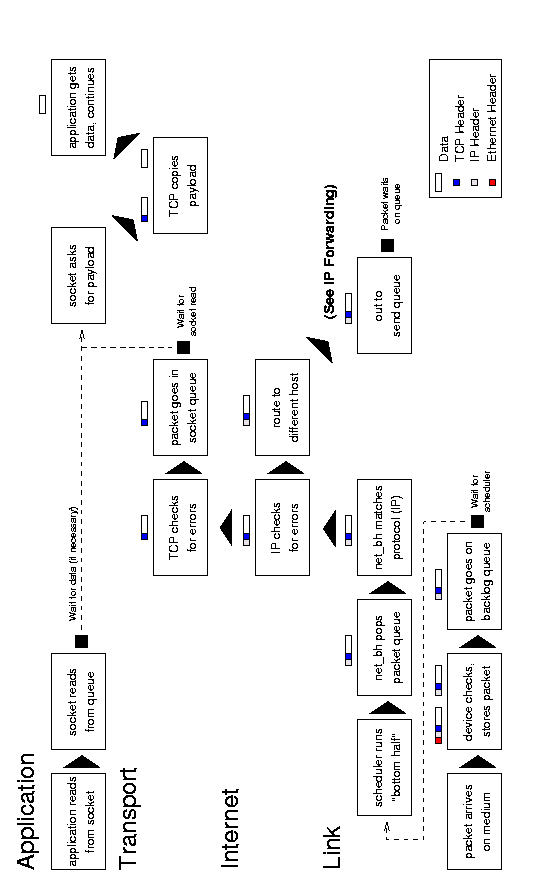

2.1. The Network Traffic Path

The Internet Protocol (IP) is the heart of the Linux messaging system. While Linux (more or less) strictly adheres to the layering concept - and it is possible to use a different protocol (like ATM) - IP is almost always the nexus through which packets flow. The IP implementation of the network layer performs routing and forwarding as well as encapsulating data. See Figure 2.1 for a simplified diagram of how network packets move through the Linux kernel.

Figure 2.1: Abstraction of the Linux message traffic path.

When an application generates traffic, it sends packets through sockets to a transport layer (TCP or UDP) and then on to the network layer (IP). In the IP layer, the kernel looks up the route to the host in either the routing cache or its Forwarding Information Base (FIB). If the packet is for another computer, the kernel addresses it and then sends it to a link layer output interface (typically an Ethernet device) which ultimately sends the packet out over the physical medium.

When a packet arrives over the medium, the input interface receives it and checks to see if the packet is indeed for the host computer. If so, it sends the packet up to the IP layer, which looks up the route to the packet's destination. If the packet has to be forwarded to another computer, the IP layer sends it back down to an output interface. If the packet is for an application, it sends it up through the transport layer and sockets for the application to read when it is ready.

Along the way, each socket and protocol performs various checks and formatting functions, detailed in later chapters. The entire process is implemented with references and jump tables that isolate each protocol, most of which are set up during initialization when the computer boots. See Chapter 3 for details of the initialization process.

2.2. The Protocol Stack

Network devices form the bottom layer of the protocol stack; they use a link layer protocol (usually Ethernet) to communicate with other devices to send and receive traffic. Input interfaces copy packets from a medium, perform some error checks, and then forward them to the network layer. Output interfaces receive packets from the network layer, perform some error checks, and then send them out over the medium.

IP is the standard network layer protocol. It checks incoming packets to see if they are for the host computer or if they need to be forwarded. It defragments packets if necessary and delivers them to the transport protocols. It maintains a database of routes for outgoing packets; it addresses and fragments them if necessary before sending them down to the link layer.

TCP and UDP are the most common transport layer protocols. UDP simply provides a framework for addressing packets to ports within a computer, while TCP allows more complex connection based operations, including recovery mechanisms for packet loss and traffic management implementations. Either one copies the packet's payload between user and kernel space. However, both are just part of the intermediate layer between the applications and the network.

IP Specific INET Sockets are the data elements and implementations of generic sockets. They have associated queues and code that executes socket operations such as reading, writing, and making connections. They act as the intermediary between an application's generic socket and the transport layer protocol.

Generic BSD Sockets are more abstract structures that contain INET sockets. Applications read from and write to BSD sockets; the BSD sockets translate the operations into INET socket operations. See Chapter 4 for more on sockets.

Applications, run in user space, form the top level of the protocol stack; they can be as simple as a two-way chat connection or as complex as the Routing Information Protocol (RIP - see Chapter 9).

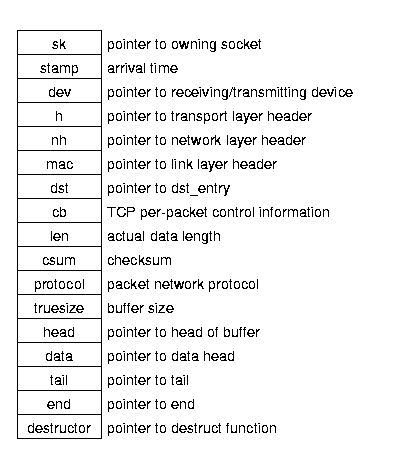

2.3. Packet Structure

The key to maintaining the strict layering of protocols without wasting time copying parameters and payloads back and forth is the common packet data structure (a socket buffer, or sk_buff - Figure 2.2). Throughout all of the various function calls as the data makes its way through the protocols, the payload data is copied only twice: once from user to kernel space and once from kernel space to output medium (for an outbound packet).

Figure 2.2: Packet (sk_buff) structure.

This structure contains pointers to all of the information about a packet - its socket, device, route, data locations, etc. Transport protocols create these packet structures from output buffers, while device drivers create them for incoming data. Each layer then fills in the information that it needs as it processes the packet. All of the protocols - transport (TCP/UDP), internet (IP), and link level (Ethernet) - use the same socket buffer.
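As a rough illustration of why headers can be added and stripped without copying the payload, the following minimal sketch models a packet buffer as one block of memory with movable data and tail pointers. It is not the kernel's sk_buff definition: the toy_skb type, its fixed 256-byte storage, and the toy_* helpers are hypothetical names invented for this example, loosely patterned on the kernel's skb_reserve(), skb_put(), skb_push(), and skb_pull() routines.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Toy packet buffer: a simplified stand-in for the kernel's sk_buff,
 * which carries many more fields (socket, device, route, ...). */
struct toy_skb {
    unsigned char buf[256];  /* fixed storage, for illustration only */
    unsigned char *data;     /* first byte currently in use */
    unsigned char *tail;     /* one past the last byte in use */
};

/* Start empty, leaving `headroom` bytes free for headers added later. */
static void toy_reserve(struct toy_skb *skb, size_t headroom)
{
    assert(headroom <= sizeof skb->buf);
    skb->data = skb->tail = skb->buf + headroom;
}

/* Append `len` payload bytes at the tail (the one real copy). */
static unsigned char *toy_put(struct toy_skb *skb, const void *src, size_t len)
{
    unsigned char *start = skb->tail;
    assert(len <= (size_t)(skb->buf + sizeof skb->buf - skb->tail));
    memcpy(start, src, len);
    skb->tail += len;
    return start;
}

/* Prepend a header in front of the data (outbound path). */
static unsigned char *toy_push(struct toy_skb *skb, const void *hdr, size_t len)
{
    assert(len <= (size_t)(skb->data - skb->buf));
    skb->data -= len;
    memcpy(skb->data, hdr, len);
    return skb->data;
}

/* Step past `len` header bytes (inbound path); the payload is not moved. */
static unsigned char *toy_pull(struct toy_skb *skb, size_t len)
{
    assert(len <= (size_t)(skb->tail - skb->data));
    skb->data += len;
    return skb->data;
}

/* Number of bytes between data and tail. */
static size_t toy_len(const struct toy_skb *skb)
{
    return (size_t)(skb->tail - skb->data);
}
```

In this model a transport protocol would call toy_reserve() to leave headroom and toy_put() to copy in the payload, and each lower layer would toy_push() its own header; on input, each layer calls toy_pull() to step past the header it has consumed.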

2.4. Internet Routing

The IP layer handles routing between computers. It keeps two data structures: a Forwarding Information Base (FIB) that keeps track of all of the details for every known route, and a faster routing cache for destinations that are currently in use. (There is also a third structure - the neighbor table - that keeps track of computers that are physically connected to a host.)

The FIB is the primary routing reference; it contains up to 32 zones (one for each bit in an IP address) and entries for every known destination. Each zone contains entries for networks or hosts that can be uniquely identified by a certain number of bits - a network with a netmask of 255.0.0.0 has 8 significant bits and would be in zone 8, while a network with a netmask of 255.255.255.0 has 24 significant bits and would be in zone 24. When IP needs a route, it begins with the most specific zones and searches the entire table until it finds a match (there should always be at least one default entry). The file /proc/net/route has the contents of the FIB.
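The zone idea can be sketched in a few lines of C. This is only an illustration under stated assumptions, not the kernel's FIB code: the toy_route structure, mask_to_zone(), and toy_lookup() are hypothetical names, addresses are held in host byte order, netmasks are assumed contiguous, and a flat array stands in for the kernel's per-zone tables. The sketch also lets a default route with netmask 0.0.0.0 fall into zone 0.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical route entry; real FIB entries hold far more detail. */
struct toy_route {
    uint32_t network;   /* host byte order, e.g. 0xAC100100 = 172.16.1.0 */
    uint32_t netmask;   /* contiguous mask, e.g. 0xFFFFFF00 */
    const char *dev;    /* output interface name */
};

/* Zone number = count of significant (leading one) bits in the netmask. */
static int mask_to_zone(uint32_t netmask)
{
    int zone = 0;
    while (netmask & 0x80000000u) {
        zone++;
        netmask <<= 1;
    }
    return zone;
}

/* Search from the most specific zone down; the first match wins. */
static const struct toy_route *toy_lookup(const struct toy_route *tbl,
                                          size_t n, uint32_t dst)
{
    assert(tbl != NULL || n == 0);
    for (int zone = 32; zone >= 0; zone--) {
        for (size_t i = 0; i < n; i++) {
            if (mask_to_zone(tbl[i].netmask) == zone &&
                (dst & tbl[i].netmask) == tbl[i].network)
                return &tbl[i];
        }
    }
    return NULL;  /* no route at all, not even a default */
}
```

With a table holding a host route, a /24 network route, and a default route, a lookup for the host's own address returns the host route because zone 32 is examined first; any off-network address falls through to the default entry.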

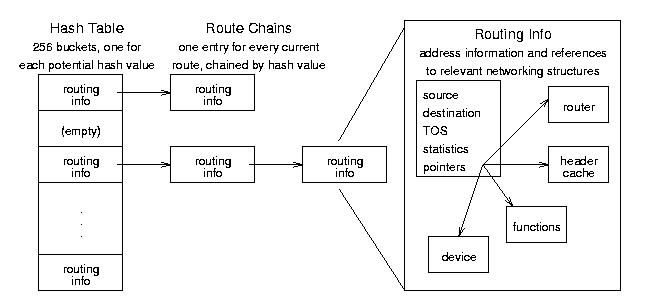

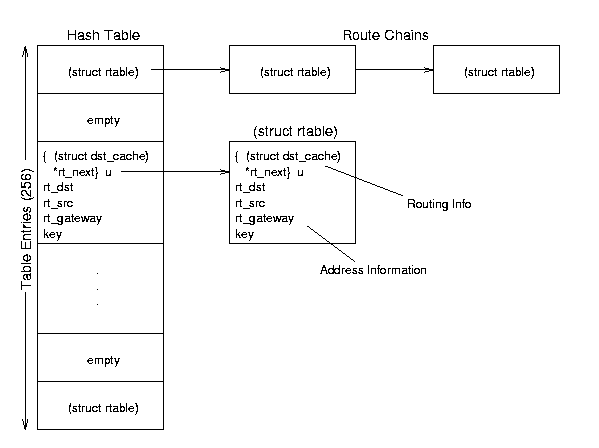

The routing cache is a hash table that IP uses to actually route packets. It contains up to 256 chains of current routing entries, with each entry's position determined by a hash function. When a host needs to send a packet, IP looks for an entry in the routing cache. If there is none, it finds the appropriate route in the FIB and inserts a new entry into the cache. (This entry is what the various protocols use to route, not the FIB entry.) The entries remain in the cache as long as they are being used; if there is no traffic for a destination, the entry times out and IP deletes it. The file /proc/net/rt_cache has the contents of the routing cache.
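A chained hash table of this shape might be sketched as follows. The names (toy_cache, toy_cache_entry, toy_hash) are hypothetical, and the hash function is a placeholder chosen only to yield a chain index from 0 to 255; it is not the kernel's hash. Entry expiry is not modeled.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_CACHE_CHAINS 256

/* Hypothetical cache entry: destination plus the interface to use. */
struct toy_cache_entry {
    uint32_t dst;                  /* destination address, host byte order */
    const char *dev;               /* output interface name */
    struct toy_cache_entry *next;  /* next entry on the same chain */
};

struct toy_cache {
    struct toy_cache_entry *chain[TOY_CACHE_CHAINS];
};

/* Placeholder hash: fold the four address bytes into one chain index. */
static unsigned toy_hash(uint32_t dst)
{
    return (dst ^ (dst >> 8) ^ (dst >> 16) ^ (dst >> 24)) & 0xFFu;
}

/* Walk one chain looking for an exact destination match. */
static struct toy_cache_entry *toy_cache_find(struct toy_cache *c, uint32_t dst)
{
    assert(c != NULL);
    for (struct toy_cache_entry *e = c->chain[toy_hash(dst)]; e; e = e->next)
        if (e->dst == dst)
            return e;
    return NULL;  /* miss: the caller would consult the FIB and insert */
}

/* Insert a caller-allocated entry at the head of its chain. */
static void toy_cache_insert(struct toy_cache *c, struct toy_cache_entry *e)
{
    assert(c != NULL && e != NULL);
    unsigned h = toy_hash(e->dst);
    e->next = c->chain[h];
    c->chain[h] = e;
}
```

The point of the design is that a hit costs one hash computation and a short chain walk, while the more expensive zone-by-zone FIB search is paid only on a miss.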

These tables perform all the routing on a normal system. Even other protocols (such as RIP) use the same structures; they just modify the existing tables within the kernel using the ioctl() function. See Chapter 8 for routing details.

Chapter 3

3. Network Initialization

This chapter presents network initialization on startup. It provides an overview of what happens when the Linux operating system boots, shows how the kernel and supporting programs ifconfig and route establish network links, shows the differences between several example configurations, and summarizes the implementation code within the kernel and network programs.

3.1. Overview

Linux initializes routing tables on startup only if a computer is configured for networking. (Almost all Linux machines do implement networking, even stand-alone machines, if only to use the loopback device.) When the kernel finishes loading itself, it runs a set of common but system specific utility programs and reads configuration files, several of which establish the computer's networking capabilities. These determine its own address, initialize its interfaces (such as Ethernet cards), and add critical and known static routes (such as one to a router that connects it with the rest of the Internet). If the computer is itself a router, it may also execute a program that allows it to update its routing tables dynamically (but this is NOT run on most hosts).

The entire configuration process can be static or dynamic. If addresses and names never (or infrequently) change, the system administrator must define options and variables in files when setting up the system. In a more mutable environment, a host will use a protocol like the Dynamic Host Configuration Protocol (DHCP) to ask for an address, router, and DNS server information with which to configure itself when it boots. (In fact, in either case, the administrator will almost always use a GUI - like Red Hat's Control Panel - which automatically writes the configuration files shown below.)

An important point to note is that while most computers running Linux start up the same way, the programs and their locations are not by any means standardized; they may vary widely depending on distribution, security concerns, or whim of the system administrator. This chapter presents as generic a description as possible but assumes a Red Hat Linux 6.1 distribution and a generally static network environment.

3.2. Startup

When Linux boots as an operating system, it loads its image from the disk into memory, unpacks it, and establishes itself by installing the file systems and memory management and other key systems. As the kernel's last (initialization) task, it executes the init program. This program reads a configuration file (/etc/inittab) which directs it to execute a startup script (found in /etc/rc.d on Red Hat distributions). This in turn executes more scripts, eventually including the network script (/etc/rc.d/init.d/network). (See Section 3.3 for examples of the script and file interactions.)

3.2.1. The Network Initialization Script

The network initialization script sets environment variables to identify the host computer and establish whether or not the computer will use a network. Depending on the values given, the network script turns on (or off) IP forwarding and IP fragmentation. It also establishes the default router for all network traffic and the device to use to send such traffic. Finally, it brings up any network devices using the ifconfig and route programs. (In a dynamic environment, it would query the DHCP server for its network information instead of reading its own files.)

The script(s) involved in establishing networking can be very straightforward; it is entirely possible to have one big script that simply executes a series of commands that will set up a single machine properly. However, most Linux distributions come with a large number of generic scripts that work for a wide variety of machine setups. This leaves a lot of indirection and conditional execution in the scripts, but actually makes setting up any one machine much easier. For example, on Red Hat distributions, the /etc/rc.d/init.d/network script runs several other scripts and sets up variables like interfaces_boot to keep track of which /etc/sysconfig/network-scripts/ifup scripts to run. Tracing the process manually is very complicated, but simple modifications of only two configuration files (putting the proper names and IP addresses in the /etc/sysconfig/network and /etc/sysconfig/network-scripts/ifcfg-eth0 files) set up the entire system properly (and a GUI makes the process even simpler).

When the network script finishes, the FIB contains the specified routes to given hosts or networks and the routing cache and neighbor tables are empty. When traffic begins to flow, the kernel will update the neighbor table and routing cache as part of the normal network operations. (Network traffic may begin during initialization if a host is dynamically configured or consults a network clock, for example.)

3.2.2. ifconfig

The ifconfig program configures interface devices for use. (This program, while very widely used, is not part of the kernel.) It provides each device with its (IP) address, netmask, and broadcast address. The device in turn will run its own initialization functions (to set any static variables) and register its interrupts and service routines with the kernel. The ifconfig commands in the network script look like this:

ifconfig ${DEVICE} ${IPADDR} netmask ${NMASK} broadcast ${BCAST}

(where the variables are either written directly in the script or are defined in other scripts).
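The address, netmask, and broadcast address handed to ifconfig are related by simple bit arithmetic: the network address is the IP address ANDed with the netmask, and the broadcast address is the network address with all host bits set. The following sketch shows that relationship; parse_ipv4(), network_of(), and broadcast_of() are hypothetical helper names written for this example and are not part of ifconfig.

```c
#include <assert.h>
#include <stdint.h>
#include <stdio.h>

/* Parse dotted-quad text into a host-byte-order value.
 * Returns 0 on success, -1 if the text is not four octets in 0..255. */
static int parse_ipv4(const char *text, uint32_t *out)
{
    unsigned a, b, c, d;
    char extra;

    assert(text != NULL && out != NULL);
    /* %c catches trailing junk: exactly four conversions must succeed. */
    if (sscanf(text, "%u.%u.%u.%u%c", &a, &b, &c, &d, &extra) != 4)
        return -1;
    if (a > 255 || b > 255 || c > 255 || d > 255)
        return -1;
    *out = ((uint32_t)a << 24) | ((uint32_t)b << 16) | ((uint32_t)c << 8) | d;
    return 0;
}

/* Network address: keep only the bits covered by the mask. */
static uint32_t network_of(uint32_t addr, uint32_t mask)
{
    return addr & mask;
}

/* Broadcast address: network bits kept, every host bit set to one. */
static uint32_t broadcast_of(uint32_t addr, uint32_t mask)
{
    return (addr & mask) | ~mask;
}
```

For the address 172.16.1.1 with netmask 255.255.255.0, these helpers give a network of 172.16.1.0 and a broadcast address of 172.16.1.255.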

The ifconfig program can also provide information about currently configured network devices (calling with no arguments displays all the active interfaces; calling with the -a option displays all interfaces, active or not):

ifconfig

This provides all the information available about each working interface: addresses, status, packet statistics, and operating system specifics. Usually there will be at least two interfaces - a network card and the loopback device. The information for each interface looks like this (this is the viper interface):

eth0 Link encap:Ethernet HWaddr 00:C1:4E:7D:9E:25

inet addr:172.16.1.1 Bcast:172.16.1.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:389016 errors:16534 dropped:0 overruns:0 frame:24522

TX packets:400845 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:100

Interrupt:11 Base address:0xcc00

A superuser can use ifconfig to change interface settings from the command line; here is the syntax:

ifconfig interface [aftype] options | address ...

...and some of the more useful calls:

ifconfig eth0 down - shut down eth0

ifconfig eth1 up - activate eth1

ifconfig eth0 arp - enable ARP on eth0

ifconfig eth0 -arp - disable ARP on eth0

ifconfig eth0 netmask 255.255.255.0 - set the eth0 netmask

ifconfig lo mtu 2000 - set the loopback maximum transfer unit

ifconfig eth1 172.16.0.7 - set the eth1 IP address

Note that modifying an interface configuration can indirectly change the routing tables. For example, changing the netmask may make some routes moot (including the default or even the route to the host itself) and the kernel will delete them.

3.2.3. route

The route program simply adds predefined routes for interface devices to the Forwarding Information Base (FIB). This is not part of the kernel, either; it is a user program whose command in the script looks like this:

route add -net ${NETWORK} netmask ${NMASK} dev ${DEVICE}

-or-

route add -host ${IPADDR} ${DEVICE}

(where the variables are again spelled out or defined in other scripts).

The route program can also delete routes (if run with the del option) or provide information about the routes that are currently defined (if run with no options):

route

This displays the Kernel IP routing table (the FIB, not the routing cache). For example (the stealth computer):

Kernel IP routing table

Destination     Gateway         Genmask         Flags Metric Ref    Use Iface

172.16.1.4      *               255.255.255.255 UH    0      0        0 eth0

172.16.1.0      *               255.255.255.0   U     0      0        0 eth0

127.0.0.0       *               255.0.0.0       U     0      0        0 lo

default         viper.u.edu     0.0.0.0         UG    0      0        0 eth0

A superuser can use route to add and delete IP routes from the command line; here is the basic syntax:

route add [-net|-host] target [option arg]

route del [-net|-host] target [option arg]

... and some useful examples:

route add -host 127.16.1.0 eth1 - adds a route to a host

route add -net 172.16.1.0 netmask 255.255.255.0 eth0 - adds a network

route add default gw jeep - sets the default route through jeep

(Note that a route to jeep must already be set up)

route del -host 172.16.1.16 - deletes entry for host 172.16.1.16

3.2.4. Dynamic Routing Programs

If the computer is a router, the network script will run a routing program like routed or gated. Since most computers are always on the same hard-wired network with the same set of addresses and limited routing options, most computers do not run one of these programs. (If an Ethernet cable is cut, traffic simply will not flow; there is no need to try to reroute or adjust routing tables.) See Chapter 9 for more information about routed.

3.3. Examples

The following are examples of files for systems set up in three different ways and explanations of how they work. Typically every computer will execute a network script that reads configuration files, even if the files tell the computer not to implement any networking.

3.3.1. Home Computer

These files would be on a computer that is not permanently connected to a network, but has a modem for ppp access. (This section does not reference a computer from the general example.)

This is the first file the network script will read; it sets several environment variables. The first two variables set the computer to run networking programs (even though it is not on a network) but not to forward packets (since it has nowhere to send them). The last two variables are generic entries.

/etc/sysconfig/network

NETWORKING=yes

FORWARD_IPV4=false

HOSTNAME=localhost.localdomain

GATEWAY=

After setting these variables, the network script will decide that it needs to configure at least one network device in order to be part of a network. The next file (which is almost exactly the same on all Linux computers) sets up environment variables for the loopback device. It names it and gives it its (standard) IP address, network mask, and broadcast address as well as any other device specific variables. (The ONBOOT variable is a flag for the script program that tells it to configure this device when it boots.) Most computers, even those that will never connect to the Internet, install the loopback device for inter-process communication.

/etc/sysconfig/network-scripts/ifcfg-lo

DEVICE=lo

IPADDR=127.0.0.1

NMASK=255.0.0.0

NETWORK=127.0.0.0

BCAST=127.255.255.255

ONBOOT=yes

NAME=loopback

BOOTPROTO=none

After setting these variables, the script will run the ifconfig program and stop, since there is nothing else to do at the moment. However, when the ppp program connects to an Internet Service Provider, it will establish a ppp device and addressing and routes based on the dynamic values assigned by the ISP. The DNS server and other connection information should be in an ifcfg-ppp file.

3.3.2. Host Computer on a LAN

These files would be on a computer that is connected to a LAN; it has one Ethernet card that should come up whenever the computer boots. These files reflect entries on the stealth computer from the general example.

This is the first file the network script will read; again the first variables simply determine that the computer will do networking but that it will not forward packets. The last four variables identify the computer and its link to the rest of the Internet (everything that is not on the LAN).

/etc/sysconfig/network

NETWORKING=yes

FORWARD_IPV4=false

HOSTNAME=stealth.cs.u.edu

DOMAINNAME=cs.u.edu

GATEWAY=172.16.1.1

GATEWAYDEV=eth0

After setting these variables, the network script will configure the network devices. This file sets up environment variables for the Ethernet card. It names the device and gives it its IP address, network mask, and broadcast address as well as any other device specific variables. This kind of computer would also have a loopback configuration file exactly like the one for a non-networked computer.

/etc/sysconfig/network-scripts/ifcfg-eth0

DEVICE=eth0

IPADDR=172.16.1.4

NMASK=255.255.255.0

NETWORK=172.16.1.0

BCAST=172.16.1.255

ONBOOT=yes

BOOTPROTO=none

After setting these variables, the network script will run the ifconfig program to start the device. Finally, the script will run the route program to add the default route (GATEWAY) and any other specified routes (found in the /etc/sysconfig/static-routes file, if any). In this case only the default route is specified, since all traffic either stays on the LAN (where the computer will use ARP to find other hosts) or goes through the router to get to the outside world.

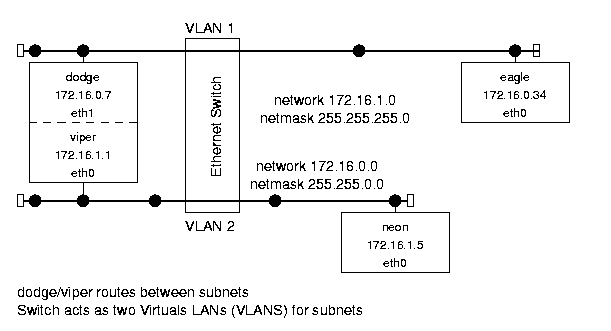

3.3.3. Network Routing Computer

These files would be on a computer that serves as a router between two networks; it has two Ethernet cards, one for each network. One card is on a large network (WAN) connected to the Internet (through yet another router) while the other is on a subnetwork (LAN). Computers on the LAN that need to communicate with the rest of the Internet send traffic through this computer (and vice versa). These files reflect entries on the dodge/viper computer from the general example.

This is the first file the network script will read; it sets several environment variables. The first two simply determine that the computer will do networking (since it is on a network) and that this one will forward packets (from one network to the other). IP Forwarding is built into most kernels, but it is not active unless there is a 1 "written" to the /proc/sys/net/ipv4/ip_forward file. (One of the network scripts performs an echo 1 > /proc/sys/net/ipv4/ip_forward if FORWARD_IPV4 is true.) The last four variables identify the computer and its link to the rest of the Internet (everything that is not on one of its own networks).

/etc/sysconfig/network

NETWORKING=yes

FORWARD_IPV4=true

HOSTNAME=dodge.u.edu

DOMAINNAME=u.edu

GATEWAY=172.16.0.1

GATEWAYDEV=eth1

After setting these variables, the network script will configure the network devices. These files set up environment variables for two Ethernet cards. They name the devices and give them their IP addresses, network masks, and broadcast addresses. (Note that the BOOTPROTO variable remains undefined for the second card.) Again, this computer would have the standard loopback configuration file.

/etc/sysconfig/network-scripts/ifcfg-eth0

DEVICE=eth0

IPADDR=172.16.1.1

NMASK=255.255.255.0

NETWORK=172.16.1.0

BCAST=172.16.1.255

ONBOOT=yes

BOOTPROTO=static

/etc/sysconfig/network-scripts/ifcfg-eth1

DEVICE=eth1

IPADDR=172.16.0.7

NMASK=255.255.0.0

NETWORK=172.16.0.0

BCAST=172.16.255.255

ONBOOT=yes

After setting these variables, the network script will run the ifconfig program to start each device. Finally, the script will run the route program to add the default route (GATEWAY) and any other specified routes (found in the /etc/sysconfig/static-routes file, if any). In this case again, the default route is the only specified route, since all traffic will go on the network indicated by the network masks or through the default router to reach the rest of the Internet.
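The forwarding switch described earlier in this section is an ordinary write to a proc file, so a program can flip it the same way the script's echo command does. The following is a minimal sketch under that assumption; write_flag_file() is a hypothetical helper name, the caller supplies the path of the ip_forward file, and writing to the real file requires superuser access.

```c
#include <assert.h>
#include <stdio.h>

/* Write "1" or "0" (plus newline) to the file at `path`.
 * Returns 0 on success, -1 if the file cannot be opened or written. */
static int write_flag_file(const char *path, int enabled)
{
    assert(path != NULL);

    FILE *fp = fopen(path, "w");
    if (fp == NULL)
        return -1;

    int ok = fputs(enabled ? "1\n" : "0\n", fp) >= 0;
    if (fclose(fp) != 0)   /* a failed close can hide a failed write */
        ok = 0;
    return ok ? 0 : -1;
}
```

A router setup program might call this once at startup with the ip_forward path and a nonzero second argument, and call it again with 0 to restore the system to its normal state after an experiment.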

3.4. Linux and Network Program Functions

The following are alphabetic lists of the Linux kernel and network program functions that are most important to initialization, where they are in the source code, and what they do. The SOURCES directory shown represents the directory that contains the source code for the given network file. The executable files should come with any Linux distribution, but the source code probably does not.

These sources are available as a package separate from the kernel source (Red Hat Linux uses the rpm package manager). The code below is from the net-tools-1.53-1 source code package, 29 August 1999. The packages are available from the www.redhat.com/apps/download web page. Once downloaded, root can install the package with the following commands (starting from the directory with the package):

rpm -i net-tools-1.53-1.src.rpm

cd /usr/src/redhat/SOURCES

tar xzf net-tools-1.53.tar.gz

This creates a /usr/src/redhat/SOURCES/net-tools-1.53 directory and fills it with the source code for the ifconfig and route programs (among others). This process should be similar (but is undoubtedly not exactly the same) for other Linux distributions.

3.4.1. ifconfig

devinet_ioctl() - net/ipv4/devinet.c (398)

creates an info request (ifreq) structure and copies data from user to kernel space

if it is an INET level request or action, executes it

if it is a device request or action, calls a device function

copies ifreq back into user memory

returns 0 for success

>>> ifconfig main() - SOURCES/ifconfig.c (478)

opens a socket (only for use with ioctl function)

searches command line arguments for options

calls if_print() if there were no arguments or the only argument is an interface name

loops through remaining arguments, setting or clearing flags or calling ioctl() to set variables for the interface

if_fetch() - SOURCES/lib/interface.c (338)

fills in an interface structure with multiple calls to ioctl() for flags, hardware address, metric, MTU, map, and address information

if_print() - SOURCES/ifconfig.c (121)

calls ife_print() for given (or all) interface(s)

(calls if_readlist() to fill structure list if necessary and then displays information about each interface)

if_readlist() - SOURCES/lib/interface.c (261)

opens /proc/net/dev and parses data into interface structures

calls add_interface() for each device to put structures into a list

inet_ioctl() - net/ipv4/af_inet.c (855)

executes a switch based on the command passed

[for ifconfig, calls devinet_ioctl()]

ioctl() -

jumps to appropriate handler routine [= inet_ioctl()]

3.4.2. route

INET_rinput() - SOURCES/lib/inet_sr.c (305)

checks for errors (cannot flush table or modify routing cache)

calls INET_setroute()

INET_rprint() - SOURCES/lib/inet_gr.c (442)

if the FIB flag is set, calls rprint_fib()

(reads, parses, and displays contents of /proc/net/route)

if the CACHE flag is set, calls rprint_cache()

(reads, parses, and displays contents of /proc/net/rt_cache)

INET_setroute() - SOURCES/lib/inet_sr.c (57)

establishes whether route is to a network or a host

checks to see if address is legal

loops through arguments, filling in rtentry structure

checks for netmask conflicts

creates a temporary socket

calls ioctl() with rtentry to add or delete route

closes socket and returns 0

ioctl() -

jumps to appropriate handler routine [= ip_rt_ioctl()]

ip_rt_ioctl() - net/ipv4/fib_frontend.c (246)

converts passed parameters to routing table entry (struct rtentry)

if deleting a route:

calls fib_get_table() to find the appropriate table

calls the table->tb_delete() function to remove it

if adding a route:

calls fib_new_table() to find an entry point

calls the table->tb_insert() function to add the entry

returns 0 for success

>>> route main() - SOURCES/route.c (106)

calls initialization routines that set print and edit functions

gets and parses the command line options (acts on some options directly by setting flags or displaying information)

checks the options (prints a usage message if there is an error)

if there are no options, calls route_info()

if the option is to add, delete, or flush routes,

calls route_edit() with the passed parameters

if the option is invalid, prints a usage message

returns result of

route_edit() - SOURCES/lib/setroute.c (69)

calls get_aftype() to translate address family from text to a pointer

checks for errors (unsupported or nonexistent family)

calls the address family rinput() function [= INET_rinput()]

route_info() - SOURCES/lib/getroute.c (72)

calls get_aftype() to translate address family from text to a pointer

checks for errors (unsupported or nonexistent family)

calls the address family rprint() function [= INET_rprint()]

Chapter 4

4. Connections

This chapter presents the connection process. It provides an overview of the connection process, a description of the socket data structures, an introduction to the routing system, and a summary of the implementation code within the kernel.

4.1. Overview

The simplest form of networking is a connection between two hosts. On each end, an application gets a socket, makes the transport layer connection, and then sends or receives packets. In Linux, a socket is actually composed of two socket structures (one that contains the other). When an application creates a socket, it is initialized but empty. When the socket makes a connection (whether or not this involves traffic with the other end) the IP layer determines the route to the distant host and stores that information in the socket. From that point on, all traffic using that connection uses that route - sent packets will travel through the correct device and the proper routers to the distant host, and received packets will appear in the socket's queue.

4.2. Socket Structures

There are two main socket structures in Linux: general BSD sockets and IP specific INET sockets. They are strongly interrelated; a BSD socket has an INET socket as a data member and an INET socket has a BSD socket as its owner.

BSD sockets are of type struct socket as defined in include/linux/net.h. BSD socket variables are usually named sock or some variation thereof. This structure has only a few entries, the most important of which are described below.

struct proto_ops *ops - this structure contains pointers to protocol specific functions for implementing general socket behavior. For example, ops->sendmsg points to the inet_sendmsg() function.

struct inode *inode - this structure points to the file inode that is associated with this socket.

struct sock *sk - this is the INET socket that is associated with this socket.

INET sockets are of type struct sock as defined in include/net/sock.h. INET socket variables are usually named sk or some variation thereof. This structure has many entries related to a wide variety of uses; there are many hacks and configuration dependent fields. The most important data members are described below:

struct sock *next, *pprev - all sockets are linked by various protocols, so these pointers allow the protocols to traverse them.

struct dst_entry *dst_cache - this is a pointer to the route to the socket's other side (the destination for sent packets).

struct sk_buff_head receive_queue - this is the head of the receive queue.

struct sk_buff_head write_queue - this is the head of the send queue.

__u32 saddr - the (Internet) source address for this socket.

struct sk_buff_head back_log,error_queue - extra queues for a backlog of packets (not to be confused with the main backlog queue) and erroneous packets for this socket.

struct proto *prot - this structure contains pointers to transport layer protocol specific functions. For example, prot->recvmsg may point to the tcp_recvmsg() function.

union { struct tcp_opt af_tcp; } tp_pinfo - TCP options for this socket.

struct socket *sock - the parent BSD socket.

Note that there are many more fields within this structure; these are only the most critical and non-obvious. The rest are either not very important or have self-explanatory names (e.g., ip_ttl is the IP Time-To-Live counter).
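The mutual reference between the two structures can be pictured with a stripped-down model. The following is a minimal sketch, not the kernel's definitions: the types demo_socket and demo_sock and the functions demo_attach() and demo_detach() are hypothetical stand-ins that keep only a few of the members discussed above.

```c
#include <assert.h>
#include <stddef.h>

struct demo_sock;                  /* stands in for struct sock (INET) */

/* Stand-in for struct socket (BSD). */
struct demo_socket {
    int               type;        /* e.g. stream or datagram */
    struct demo_sock *sk;          /* the INET socket it contains */
};

/* Stand-in for struct sock (INET). */
struct demo_sock {
    unsigned int        saddr;     /* source address */
    void               *dst_cache; /* cached route, NULL until connected */
    struct demo_socket *sock;      /* the owning BSD socket */
};

/* Tie the two halves together so that each can reach the other. */
void demo_attach(struct demo_socket *sock, struct demo_sock *sk)
{
    assert(sock != NULL && sk != NULL);
    sock->sk = sk;
    sk->sock = sock;
}

/* Undo the link, as a release step would before freeing either half. */
void demo_detach(struct demo_socket *sock)
{
    assert(sock != NULL);
    if (sock->sk != NULL) {
        sock->sk->sock = NULL;
        sock->sk = NULL;
    }
}
```

After demo_attach(), code holding either pointer can reach the other half (sock->sk->sock is sock again), which is the property the socket and transport layers rely on when they hand control back and forth.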

4.3. Sockets and Routing

Sockets only go through the routing lookup process once for each destination (at connection time). Because Linux sockets are so closely related to IP, they contain routes to the other end of a connection (in the sock->sk->dst_cache variable). The transport protocols call the ip_route_connect() function to determine the route from host to host during the connection process; after that, the route is presumed not to change (though the path pointed to by the dst_cache may indeed change). The socket does not need to do continuous routing table look-ups for each packet it sends or receives; it only tries again if something unexpected happens (such as a neighboring computer going down). This is the benefit of using connections.

4.4. Connection Processes

4.4.1. Establishing Connections

Application programs establish sockets with a series of system calls that look up the distant address, establish a socket, and then connect to the machine on the other end.

/* look up host */

server = gethostbyname(SERVER_NAME);

/* get socket */

sockfd = socket(AF_INET, SOCK_STREAM, 0);

/* set up address */

address.sin_family = AF_INET;

address.sin_port = htons(PORT_NUM);

memcpy(&address.sin_addr,server->h_addr,server->h_length);

/* connect to server */

connect(sockfd, (struct sockaddr *)&address, sizeof(address));

The gethostbyname() function simply looks up a host (such as "viper.cs.u.edu") and returns a structure that contains an Internet (IP) address. This has very little to do with routing (only inasmuch as the host may have to query the network to look up an address) and is simply a translation from a human readable form (text) to a computer compatible one (an unsigned 4 byte integer).

The socket() call is more interesting. It creates a socket object with the appropriate data type (a sock for INET sockets) and initializes it. The socket contains inode information and protocol specific pointers for various network functions. It also establishes defaults for queues (incoming, outgoing, error, and backlog), dummy header information for TCP sockets, and various state information.

Finally, the connect() call goes to the protocol dependent connection routine (e.g., tcp_v4_connect() or udp_connect()). UDP simply establishes a route to the destination (since there is no virtual connection). TCP establishes the route and then begins the TCP connection process, sending a packet with appropriate connection and window flags set.

4.4.2. Socket Call Walk-Through

- Check for errors in call

- Create (allocate memory for) socket object

- Put socket into INODE list

- Establish pointers to protocol functions (INET)

- Store values for socket type and protocol family

- Set socket state to closed

- Initialize packet queues

4.4.3. Connect Call Walk-Through

- Check for errors

- Determine route to destination:

- Check routing table for existing entry (return that if one exists)

- Look up destination in FIB

- Build new routing table entry

- Put entry in routing table and return it

- Store pointer to routing entry in socket

- Call protocol specific connection function (e.g., send a TCP connection packet)

- Set socket state to established
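The route determination steps above (check the routing cache, fall back to the FIB, then install the result) can be sketched as a small model. This is an illustration under simplifying assumptions, not the kernel's code: the names demo_route, demo_fib_entry, demo_route_output(), and demo_slow_lookups are hypothetical, the cache is a direct-mapped array rather than a chained hash table, and TOS and source addresses are ignored.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define CACHE_SLOTS 8

struct demo_route {
    uint32_t dst;       /* destination address */
    uint32_t gateway;   /* next hop, 0 if directly reachable */
    int      valid;
};

struct demo_fib_entry { uint32_t net, mask, gateway; };

static struct demo_route cache[CACHE_SLOTS];
int demo_slow_lookups;  /* counts how often the FIB had to be consulted */

/* Slow path: scan the FIB for the most specific prefix that matches. */
static const struct demo_fib_entry *
fib_search(const struct demo_fib_entry *fib, size_t n, uint32_t dst)
{
    const struct demo_fib_entry *best = NULL;

    for (size_t i = 0; i < n; i++)
        if ((dst & fib[i].mask) == fib[i].net &&
            (best == NULL || fib[i].mask > best->mask))
            best = &fib[i];
    return best;
}

/* Return a route to dst, or NULL if the destination is unreachable. */
const struct demo_route *
demo_route_output(const struct demo_fib_entry *fib, size_t n, uint32_t dst)
{
    struct demo_route *slot = &cache[dst % CACHE_SLOTS];
    const struct demo_fib_entry *e;

    assert(fib != NULL);
    if (slot->valid && slot->dst == dst)
        return slot;                /* fast path: cache hit */

    demo_slow_lookups++;
    e = fib_search(fib, n, dst);
    if (e == NULL)
        return NULL;

    slot->dst = dst;                /* build the new entry and install it */
    slot->gateway = e->gateway;
    slot->valid = 1;
    return slot;
}
```

A second call for the same destination returns the cached entry without touching the FIB, which is the saving that a connected socket gains by keeping its route pointer.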

4.4.4. Closing Connections

Closing a socket is fairly straightforward. An application calls close() on a socket, which becomes a sock_close() function call. This changes the socket state to disconnecting and calls the data member's (INET socket's) release function. The INET socket in turn cleans up its queues and calls the transport protocol's close function, tcp_close() or udp_close(). These perform any necessary actions (the TCP functions may send out packets to end the TCP connection) and then clean up any data structures they have remaining. Note that no changes are made for routing; the (now-empty) socket no longer has a reference to the destination and the entry in the routing cache will remain until it is freed for lack of use.

4.4.5. Close Walk-Through

- Check for errors (does the socket exist?)

- Change the socket state to disconnecting to prevent further use

- Do any protocol closing actions (e.g., send a TCP packet with the FIN bit set)

- Free memory for socket data structures (TCP/UDP and INET)

- Remove socket from INODE list

4.5. Linux Functions

The following is an alphabetic list of the Linux kernel functions that are most important to connections, where they are in the source code, and what they do. To follow function calls for creating a socket, begin with sock_create(). To follow function calls for closing a socket, begin with sock_close().

destroy_sock() - net/ipv4/af_inet.c (195)

deletes any timers

calls any protocol specific destroy functions

frees the socket's queues

frees the socket structure itself

fib_lookup() - include/net/ip_fib.h (153)

calls tb_lookup() [= fn_hash_lookup()] on local and main tables

returns route or unreachable error

fn_hash_lookup() - net/ipv4/fib_hash.c (261)

looks up and returns route to an address

inet_create() - net/ipv4/af_inet.c (326)

calls sk_alloc() to get memory for sock

initializes sock structure:

sets proto structure to appropriate values for TCP or UDP

calls sock_init_data()

sets family, protocol, etc. variables

calls the protocol init function (if any)

inet_release() - net/ipv4/af_inet.c (463)

changes socket state to disconnecting

calls ip_mc_drop_socket() to leave multicast group (if necessary)

sets owning socket's data member to NULL

calls sk->prot->close() [= tcp_close() or udp_close()]

ip_route_connect() - include/net/route.h (140)

calls ip_route_output() to get a destination address

returns if the call works or generates an error

otherwise clears the route pointer and tries again

ip_route_output() - net/ipv4/route.c (1664)

calculates hash value for address

runs through table (starting at hash) to match addresses and TOS

if there is a match, updates stats and returns route entry

else calls ip_route_output_slow()

ip_route_output_slow() - net/ipv4/route.c (1421)

if source address is known, looks up output device

if destination address is unknown, sets up loopback

calls fib_lookup() to find route in FIB

allocates memory for new routing table entry

initializes table entry with source, destination, TOS, output device, flags

calls rt_set_nexthop() to find next destination

returns rt_intern_hash(), which installs route in routing table

rt_intern_hash() - net/ipv4/route.c (526)

loops through rt_hash_table (starting at hash value)

if keys match, puts rtable entry in front bucket

else puts rtable entry into hash table at hash

>>> sock_close() - net/socket.c (476)

checks if socket exists (could be null)

calls sock_fasync() to remove socket from async list

calls sock_release()

>>> sock_create() - net/socket.c (571)

checks parameters

calls sock_alloc() to get an available inode for the socket and initialize it

sets socket->type (to SOCK_STREAM, SOCK_DGRAM...)

calls net_family->create() [= inet_create()] to build sock structure

returns established socket

sock_init_data() - net/core/sock.c (1018)

initializes all generic sock values

sock_release() - net/socket.c (309)

changes state to disconnecting

calls sock->ops->release() [= inet_release()]

calls iput() to remove socket from inode list

sys_socket() - net/socket.c (639)

calls sock_create() to get and initialize socket

calls get_fd() to assign an fd to the socket

sets socket->file to fcheck() (pointer to file)

calls sock_release() if anything fails

tcp_close() - net/ipv4/tcp.c (1502)

checks for errors

pops and discards all packets off incoming queue

sends messages to destination to close connection (if required)

tcp_connect() - net/ipv4/tcp_output.c (910)

completes connection packet with appropriate bits and window sizes set

puts packet on socket output queue

calls tcp_transmit_skb() to send packet, initiating TCP connection

tcp_v4_connect() - net/ipv4/tcp_ipv4.c (571)

checks for errors

calls ip_route_connect() to find route to destination

creates connection packet

calls tcp_connect() to send packet

udp_close() - net/ipv4/udp.c (954)

calls udp_v4_unhash() to remove socket from socket list

calls destroy_sock()

udp_connect() - net/ipv4/udp.c (900)

calls ip_route_connect() to find route to destination

updates socket with source and destination addresses and ports

changes socket state to established

saves the destination route in sk->dst_cache

Chapter 5

5. Sending Messages

This chapter presents the sending side of message trafficking. It provides an overview of the process, examines the layers packets travel through, details the actions of each layer, and summarizes the implementation code within the kernel.

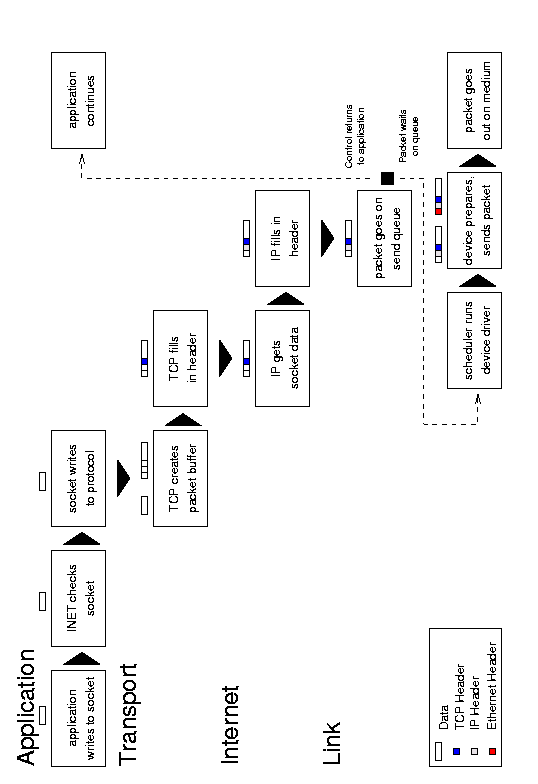

5.1. Overview

Figure 5.1: Message transmission.

An outgoing message begins with an application system call to write data to a socket. The socket examines its own connection type and calls the appropriate send routine (typically INET). The send function verifies the status of the socket, examines its protocol type, and sends the data on to the transport layer routine (such as TCP or UDP). This protocol creates a new buffer for the outgoing packet (a socket buffer, or struct sk_buff skb), copies the data from the application buffer, and fills in its header information (such as port number, options, and checksum) before passing the new buffer to the network layer (usually IP). The IP send functions fill in more of the buffer with IP's own protocol header (such as the IP addresses, options, and checksum). They may also fragment the packet if required. Next the IP layer passes the packet to the link layer function, which moves the packet onto the sending device's xmit queue and makes sure the device knows that it has traffic to send. Finally, the device (such as a network card) tells the bus to send the packet.

5.2. Sending Walk-Through

5.2.1. Writing to a Socket

- Write data to a socket (application)

- Fill in message header with location of data (socket)

- Check for basic errors - is socket bound to a port? can the socket send messages? is there something wrong with the socket?

- Pass the message header to appropriate transport protocol (INET socket)

5.2.2. Creating a Packet with UDP

- Check for errors - is the data too big? is it a UDP connection?

- Make sure there is a route to the destination (call the IP routing routines if the route is not already established; fail if there is no route)

- Create a UDP header (for the packet)

- Call the IP build and transmit function

5.2.3. Creating a Packet with TCP

- Check connection - is it established? is it open? is the socket working?

- Check for and combine data with partial packets if possible

- Create a packet buffer

- Copy the payload from user space

- Add the packet to the outbound queue

- Build current TCP header into packet (with ACKs, SYN, etc.)

- Call the IP transmit function

5.2.4. Wrapping a Packet in IP

- Create a packet buffer (if necessary - UDP)

- Look up route to destination (if necessary - TCP)

- Fill in the packet IP header

- Copy the transport header and the payload from user space

- Send the packet to the destination route's device output function
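Filling in the IP header ends with the header checksum, which is the standard Internet checksum (RFC 1071) computed over the header with the checksum field set to zero. The following is a minimal sketch of that step, not the kernel's optimized routine; the function names inet_checksum() and build_ip_header() are hypothetical, and the header built here has no options, a zero identification field, and the "don't fragment" flag set.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One's-complement sum of big-endian 16-bit words, folded to 16 bits and
 * inverted. len is in bytes; an odd trailing byte is padded with zero. */
uint16_t inet_checksum(const uint8_t *data, size_t len)
{
    uint32_t sum = 0;

    assert(data != NULL);
    while (len > 1) {
        sum += ((uint32_t)data[0] << 8) | data[1];
        data += 2;
        len -= 2;
    }
    if (len == 1)
        sum += (uint32_t)data[0] << 8;
    while (sum >> 16)
        sum = (sum & 0xFFFFu) + (sum >> 16);
    return (uint16_t)~sum;
}

/* Store a 32-bit value in network (big-endian) byte order. */
static void put32(uint8_t *p, uint32_t v)
{
    p[0] = (uint8_t)(v >> 24);
    p[1] = (uint8_t)(v >> 16);
    p[2] = (uint8_t)(v >> 8);
    p[3] = (uint8_t)v;
}

/* Fill in a 20-byte IPv4 header for a packet carrying payload_len bytes. */
void build_ip_header(uint8_t hdr[20], uint16_t payload_len, uint8_t ttl,
                     uint8_t protocol, uint32_t saddr, uint32_t daddr)
{
    uint16_t total = (uint16_t)(20 + payload_len);
    uint16_t csum;

    hdr[0] = 0x45;                    /* version 4, header length 5 words */
    hdr[1] = 0;                       /* type of service */
    hdr[2] = (uint8_t)(total >> 8);   /* total length */
    hdr[3] = (uint8_t)total;
    hdr[4] = hdr[5] = 0;              /* identification */
    hdr[6] = 0x40;                    /* flags: don't fragment */
    hdr[7] = 0;                       /* fragment offset */
    hdr[8] = ttl;
    hdr[9] = protocol;
    hdr[10] = hdr[11] = 0;            /* checksum is zero while summing */
    put32(hdr + 12, saddr);
    put32(hdr + 16, daddr);

    csum = inet_checksum(hdr, 20);
    hdr[10] = (uint8_t)(csum >> 8);
    hdr[11] = (uint8_t)csum;
}
```

A receiver can verify the header by running inet_checksum() over all 20 bytes, checksum included; a correct header gives zero.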

5.2.5. Transmitting a Packet

- Put the packet on the device output queue

- Wake up the device

- Wait for the scheduler to run the device driver

- Test the medium (device)

- Send the link header

- Tell the bus to transmit the packet over the medium
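The queue-then-transmit behavior in these steps can be modeled with a small FIFO. This is a simplified sketch under stated assumptions, not driver code: demo_dev, demo_enqueue(), demo_hard_start_xmit(), and demo_restart() are hypothetical names, packets are plain integers, and the restart routine drains the whole queue in one call.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define QLEN 4

struct demo_dev {
    int    queue[QLEN];  /* packet ids waiting to go out, oldest first */
    size_t count;
    int    busy;         /* nonzero while the medium cannot take a packet */
    int    sent;         /* how many packets have been handed to the bus */
};

/* Add a packet to the tail; returns -1 (drop) if the queue is full. */
int demo_enqueue(struct demo_dev *dev, int pkt)
{
    assert(dev != NULL);
    if (dev->count == QLEN)
        return -1;
    dev->queue[dev->count++] = pkt;
    return 0;
}

/* Stand-in for a driver's transmit routine: test the medium, then send. */
static int demo_hard_start_xmit(struct demo_dev *dev, int pkt)
{
    (void)pkt;
    if (dev->busy)
        return -1;
    dev->sent++;
    return 0;
}

/* Send queued packets in order. A packet that cannot be sent stays at the
 * head of the queue for the next run. Returns the number sent. */
int demo_restart(struct demo_dev *dev)
{
    int n = 0;

    assert(dev != NULL);
    while (dev->count > 0) {
        if (demo_hard_start_xmit(dev, dev->queue[0]) != 0)
            break;
        memmove(dev->queue, dev->queue + 1,
                (dev->count - 1) * sizeof(dev->queue[0]));
        dev->count--;
        n++;
    }
    return n;
}
```

Keeping a failed packet at the head preserves ordering, which mirrors the "requeue on error" behavior described for qdisc_restart() in the function list below.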

5.3. Linux Functions

The following is an alphabetic list of the Linux kernel functions that are most important to message traffic, where they are in the source code, and what they do. To follow function calls, begin with sock_write().

dev_queue_xmit() - net/core/dev.c (579)

calls start_bh_atomic()

if device has a queue

calls enqueue() to add packet to queue

calls qdisc_wakeup() [= qdisc_restart()] to wake device

else calls hard_start_xmit()

calls end_bh_atomic()

DEVICE->hard_start_xmit() - device dependent, drivers/net/DEVICE.c

tests to see if medium is open

sends header

tells bus to send packet

updates status

inet_sendmsg() - net/ipv4/af_inet.c (786)

extracts pointer to socket sock

checks socket to make sure it is working

verifies protocol pointer

returns sk->prot[tcp/udp]->sendmsg()

ip_build_xmit() - net/ipv4/ip_output.c (604)

calls sock_alloc_send_skb() to establish memory for skb

sets up skb header

calls getfrag() [= udp_getfrag()] to copy buffer from user space

returns rt->u.dst.output() [= dev_queue_xmit()]

ip_queue_xmit() - net/ipv4/ip_output.c (234)

looks up route

builds IP header

fragments if required

adds IP checksum

calls skb->dst->output() [= dev_queue_xmit()]

qdisc_restart() - net/sched/sch_generic.c (50)

pops packet off queue

calls dev->hard_start_xmit()

updates status

if there was an error, requeues packet

sock_sendmsg() - net/socket.c (325)

calls scm_sendmsg() [socket control message]

calls sock->ops[inet]->sendmsg() and destroys scm

>>> sock_write() - net/socket.c (399)

calls socki_lookup() to associate socket with fd/file inode

creates and fills in message header with data size/addresses

returns sock_sendmsg()

tcp_do_sendmsg() - net/ipv4/tcp.c (755)

waits for connection, if necessary

calls skb_tailroom() and adds data to waiting packet if possible

checks window status

calls sock_wmalloc() to get memory for skb

calls csum_and_copy_from_user() to copy packet and do checksum

calls tcp_send_skb()

tcp_send_skb() - net/ipv4/tcp_output.c (160)

calls __skb_queue_tail() to add packet to queue

calls tcp_transmit_skb() if possible

tcp_transmit_skb() - net/ipv4/tcp_output.c (77)

builds TCP header and adds checksum

calls tcp_build_and_update_options()

checks ACKs, SYN

calls tp->af_specific[ip]->queue_xmit()

tcp_v4_sendmsg() - net/ipv4/tcp_ipv4.c (668)

checks for IP address type, opens connection, port addresses

returns tcp_do_sendmsg()

udp_getfrag() - net/ipv4/udp.c (516)

copies and checksums a buffer from user space

udp_sendmsg() - net/ipv4/udp.c (559)

checks length, flags, protocol

sets up UDP header and address info

checks multicast

fills in route

fills in remainder of header

calls ip_build_xmit()

updates UDP status

returns err

Chapter 6

6. Receiving Messages

This chapter presents the receiving side of message trafficking. It provides an overview of the process, examines the layers packets travel through, details the actions of each layer, and summarizes the implementation code within the kernel.

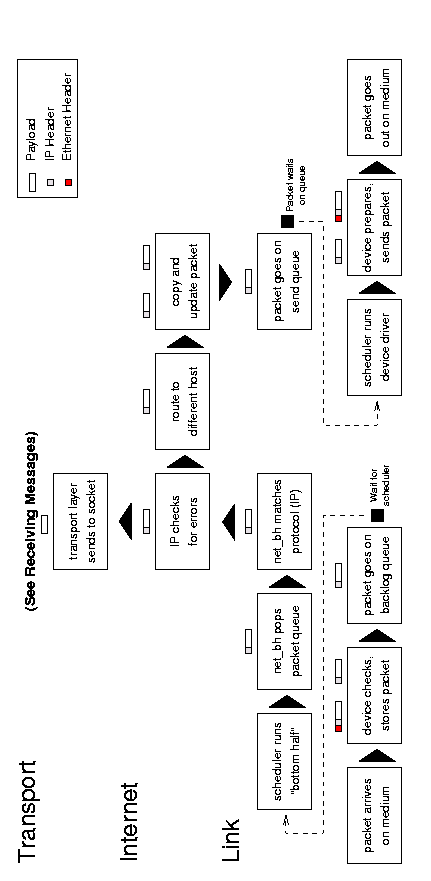

6.1. Overview

Figure 6.1: Receiving messages.

An incoming message begins with an interrupt when the system notifies the device that a message is ready. The device allocates storage space and tells the bus to put the message into that space. It then passes the packet to the link layer, which puts it on the backlog queue, and marks the network flag for the next "bottom-half" run.

The bottom-half is a Linux mechanism that minimizes the amount of work done during an interrupt. Doing a lot of processing during an interrupt is not good precisely because it interrupts a running process; instead, interrupt handlers have a "top-half" and a "bottom-half". When the interrupt arrives, the top-half runs and takes care of any critical operations, such as moving data from a device queue into kernel memory. It then marks a flag that tells the kernel that there is more work to do - when the processor has time - and returns control to the current process. The next time the process scheduler runs, it sees the flag, does the extra work, and only then schedules any normal processes.

When the process scheduler sees that there are networking tasks to do it runs the network bottom-half. This function pops packets off of the backlog queue, matches them to a known protocol (typically IP), and passes them to that protocol's receive function. The IP layer examines the packet for errors and routes it; the packet will go into an outgoing queue (if it is for another host) or up to the transport layer (such as TCP or UDP). This layer again checks for errors, looks up the socket associated with the port specified in the packet, and puts the packet at the end of that socket's receive queue.

Once the packet is in the socket's queue, the socket will wake up the application process that owns it (if necessary). That process may then make or return from a read system call that copies the data from the packet in the queue into its own buffer. (The process may also do nothing for the time being if it was not waiting for the packet, and get the data off the queue when it needs it.)

6.2. Receiving Walk-Through

6.2.1. Reading from a Socket (Part I)

- Try to read data from a socket (application)

- Fill in message header with location of buffer (socket)

- Check for basic errors - is the socket bound to a port? can the socket accept messages? is there something wrong with the socket?

- Pass the message header to the appropriate transport protocol (INET socket)

- Sleep until there is enough data to read from the socket (TCP/UDP)

6.2.2. Receiving a Packet

- Wake up the receiving device (interrupt)

- Test the medium (device)

- Receive the link header

- Allocate space for the packet

- Tell the bus to put the packet into the buffer

- Put the packet on the backlog queue

- Set the flag to run the network bottom half when possible

- Return control to the current process

6.2.3. Running the Network "Bottom Half"

- Run the network bottom half (scheduler)

- Send any packets that are waiting to prevent interrupts (bottom half)

- Loop through all packets in the backlog queue and pass the packet up to its Internet reception protocol - IP

- Flush the sending queue again

- Exit the bottom half
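The division of labor between the interrupt-time "top half" and the deferred "bottom half" can be sketched with a bounded queue and a flag. This is a simplified model, not kernel code: demo_backlog, demo_netif_rx(), demo_net_bh(), demo_ip_rcv(), and the demo_received array are hypothetical names, packets are plain integers, and the output queue flushes are left out.

```c
#include <assert.h>
#include <stddef.h>

#define BACKLOG_MAX 4

struct demo_backlog {
    int    pkts[BACKLOG_MAX];  /* circular buffer of waiting packets */
    size_t head, count;
    int    bh_pending;         /* the "run the network bottom half" flag */
    int    dropped;
};

/* Records what the protocol layer was given, in order. */
int    demo_received[16];
size_t demo_received_count;

/* Stand-in for the protocol receive function. */
void demo_ip_rcv(int pkt)
{
    if (demo_received_count < 16)
        demo_received[demo_received_count++] = pkt;
}

/* Top half: do the minimum (queue the packet, mark the flag) and return. */
void demo_netif_rx(struct demo_backlog *b, int pkt)
{
    assert(b != NULL);
    if (b->count == BACKLOG_MAX) {
        b->dropped++;          /* backlog full: drop the packet */
        return;
    }
    b->pkts[(b->head + b->count) % BACKLOG_MAX] = pkt;
    b->count++;
    b->bh_pending = 1;
}

/* Bottom half: run later, hand each queued packet to its protocol.
 * Returns the number of packets delivered. */
int demo_net_bh(struct demo_backlog *b, void (*deliver)(int pkt))
{
    int n = 0;

    assert(b != NULL && deliver != NULL);
    if (!b->bh_pending)
        return 0;
    while (b->count > 0) {
        int pkt = b->pkts[b->head];

        b->head = (b->head + 1) % BACKLOG_MAX;
        b->count--;
        deliver(pkt);
        n++;
    }
    b->bh_pending = 0;
    return n;
}
```

The top half never calls the protocol code itself, so the time spent with the running process interrupted stays short no matter how expensive packet processing is.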

6.2.4. Unwrapping a Packet in IP

- Check packet for errors - too short? too long? invalid version? checksum error?

- Defragment the packet if necessary

- Get the route for the packet (could be for this host or could need to be forwarded)

- Send the packet to its destination handling routine (TCP or UDP reception, or possibly retransmission to another host)
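The error checks in the first step can be written out directly against the IPv4 header layout. The following is a minimal sketch under simplifying assumptions, not the ip_rcv() code: demo_ip_check() and the demo_verdict values are hypothetical names, options are not parsed, and a frame longer than the IP total length is accepted because the excess is link-layer padding that would be trimmed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum demo_verdict {
    DEMO_OK = 0,
    DEMO_TOO_SHORT,
    DEMO_BAD_VERSION,
    DEMO_BAD_LENGTH,
    DEMO_BAD_CHECKSUM
};

/* One's-complement sum over the header, checksum field included.
 * A valid header sums to 0xFFFF. len must be even. */
static uint16_t header_sum(const uint8_t *hdr, size_t len)
{
    uint32_t sum = 0;

    for (size_t i = 0; i + 1 < len; i += 2)
        sum += ((uint32_t)hdr[i] << 8) | hdr[i + 1];
    while (sum >> 16)
        sum = (sum & 0xFFFFu) + (sum >> 16);
    return (uint16_t)sum;
}

/* Check a received frame of len bytes that should start with an IP header. */
enum demo_verdict demo_ip_check(const uint8_t *pkt, size_t len)
{
    size_t ihl, total;

    assert(pkt != NULL);
    if (len < 20)
        return DEMO_TOO_SHORT;        /* cannot even hold a header */
    if ((pkt[0] >> 4) != 4)
        return DEMO_BAD_VERSION;

    ihl = (size_t)(pkt[0] & 0x0F) * 4;         /* header length in bytes */
    total = ((size_t)pkt[2] << 8) | pkt[3];    /* header plus payload */
    if (ihl < 20 || ihl > len || total < ihl || total > len)
        return DEMO_BAD_LENGTH;

    if (header_sum(pkt, ihl) != 0xFFFF)
        return DEMO_BAD_CHECKSUM;
    return DEMO_OK;
}
```

Only packets that return DEMO_OK would go on to defragmentation and routing; everything else is dropped at this point.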

6.2.5. Accepting a Packet in UDP

- Check UDP header for errors

- Match destination to socket

- Send an error message back if there is no such socket

- Put packet into appropriate socket receive queue

- Wake up any processes waiting for data from that socket

6.2.6. Accepting a Packet in TCP

- Check sequence and flags; store packet in correct space if possible

- If already received, send immediate ACK and drop packet

- Determine which socket packet belongs to

- Put packet into appropriate socket receive queue

- Wake up any processes waiting for data from that socket
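The sequence check in the first two steps depends on comparing 32-bit sequence numbers in a way that survives wraparound. The following is a minimal sketch of that comparison and of a three-way classification built on it; the names seq_before(), demo_classify(), and the demo_seg values are hypothetical, and the real receive path handles many more cases (windows, flags, partial trimming).

```c
#include <assert.h>
#include <stdint.h>

enum demo_seg { DEMO_IN_ORDER, DEMO_DUPLICATE, DEMO_OUT_OF_ORDER };

/* True if a comes before b in sequence space. The subtraction wraps, so
 * the test still works when the counter passes 0xFFFFFFFF. */
int seq_before(uint32_t a, uint32_t b)
{
    return (int32_t)(a - b) < 0;
}

/* Classify a segment carrying bytes [seq, seq + len) against rcv_nxt,
 * the next byte the receiver expects. */
enum demo_seg demo_classify(uint32_t rcv_nxt, uint32_t seq, uint32_t len)
{
    uint32_t end = seq + len;

    assert(len > 0);
    if (!seq_before(rcv_nxt, end))
        return DEMO_DUPLICATE;     /* every byte was already received */
    if (!seq_before(rcv_nxt, seq))
        return DEMO_IN_ORDER;      /* starts at or before rcv_nxt, adds data */
    return DEMO_OUT_OF_ORDER;      /* leaves a gap before its first byte */
}
```

In this model a duplicate would be acknowledged and dropped, an in-order segment would go onto the socket receive queue, and an out-of-order segment would be held until the gap is filled.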

6.2.7. Reading from a Socket (Part II)

- Wake up when data is ready (socket)

- Call transport layer receive function

- Move data from receive queue to user buffer (TCP/UDP)

- Return data and control to application (socket)

6.3. Linux Functions

The following is an alphabetic list of the Linux kernel functions that are most important to receiving traffic, where they are in the source code, and what they do. To follow function calls from the network up, start with DEVICE_rx(). To follow function calls from the application down, start with sock_read().

>>> DEVICE_rx() - device dependent, drivers/net/DEVICE.c

(gets control from interrupt)

performs status checks to make sure it should be receiving

calls dev_alloc_skb() to reserve space for packet

gets packet off of system bus

calls eth_type_trans() to determine protocol type

calls netif_rx()

updates card status

(returns from interrupt)

inet_recvmsg() - net/ipv4/af_inet.c (764)

extracts pointer to socket sock

checks socket to make sure it is accepting

verifies protocol pointer

returns sk->prot[tcp/udp]->recvmsg()

ip_rcv() - net/ipv4/ip_input.c (395)

examines packet for errors:

invalid length (too short or too long)

incorrect version (not 4)

invalid checksum

calls __skb_trim() to remove padding

defrags packet if necessary

calls ip_route_input() to route packet

examines and handles IP options

returns skb->dst->input() [= tcp_rcv,udp_rcv()]

net_bh() - net/core/dev.c (835)

(run by scheduler)

if there are packets waiting to go out, calls qdisc_run_queues()

(see sending section)

while the backlog queue is not empty

let other bottom halves run

call skb_dequeue() to get next packet

if the packet is for someone else (FASTROUTED) put onto send queue

loop through protocol lists (taps and main) to match protocol type

call pt_prev->func() [= ip_rcv()] to pass packet to appropriate protocol

call qdisc_run_queues() to flush output (if necessary)

netif_rx() - net/core/dev.c (757)

puts time in skb->stamp

if backlog queue is too full, drops packet

else

calls skb_queue_tail() to put packet into backlog queue

marks bottom half for later execution

sock_def_readable() - net/core/sock.c (989)

calls wake_up_interruptible() to put waiting process on run queue

calls sock_wake_async() to send SIGIO to socket process

sock_queue_rcv_skb() - include/net/sock.h (857)

calls skb_queue_tail() to put packet in socket receive queue

calls sk->data_ready() [= sock_def_readable()]

>>> sock_read() - net/socket.c (366)

sets up message headers

returns sock_recvmsg() with result of read

sock_recvmsg() - net/socket.c (338)

reads socket management packet (scm) or packet by calling sock->ops[inet]->recvmsg()

tcp_data() - net/ipv4/tcp_input.c (1507)

shrinks receive queue if necessary

calls tcp_data_queue() to queue packet

calls sk->data_ready() to wake socket

tcp_data_queue() - net/ipv4/tcp_input.c (1394)

if packet is out of sequence:

if old, discards immediately

else calculates appropriate storage location

calls __skb_queue_tail() to put packet in socket receive queue

updates connection state

tcp_rcv_established() - net/ipv4/tcp_input.c (1795)

if fast path

checks all flags and header info

sends ACK

calls __skb_queue_tail() to put packet in socket receive queue

else (slow path)

if out of sequence, sends ACK and drops packet

checks for FIN, SYN, RST, ACK

calls tcp_data() to queue packet

sends ACK

tcp_recvmsg() - net/ipv4/tcp.c (1149)

checks for errors

waits until there is at least one packet available

cleans up socket if connection closed

calls memcpy_toiovec() to copy payload from the socket buffer into the user space

calls cleanup_rbuf() to release memory and send ACK if necessary

calls remove_wait_queue() to wake process (if necessary)

udp_queue_rcv_skb() - net/ipv4/udp.c (963)

calls sock_queue_rcv_skb()

updates UDP status (frees skb if queue failed)

udp_rcv() - net/ipv4/udp.c (1062)

gets UDP header, trims packet, verifies checksum (if required)

checks multicast

calls udp_v4_lookup() to match packet to socket

if no socket found, sends ICMP message back, frees skb, and stops

calls udp_deliver() [= udp_queue_rcv_skb()]

udp_recvmsg() - net/ipv4/udp.c (794)

calls skb_recv_datagram() to get packet from queue

calls skb_copy_datagram_iovec() to move the payload from the socket buffer into the user space

updates the socket timestamp

fills in the source information in the message header

frees the packet memory

Chapter 7

7. IP Forwarding

This chapter presents the pure routing side (by IP forwarding) of message traffic. It provides an overview of the process, examines the layers packets travel through, details the actions of each layer, and summarizes the implementation code within the kernel.

7.1. Overview

See Figure 7.1 for an abstract diagram of the forwarding process. (It is essentially a combination of the receiving and sending processes.)

Figure 7.1: IP Forwarding.

A forwarded packet arrives with an interrupt when the system notifies the device that a message is ready. The device allocates storage space and tells the bus to put the message into that space. It then passes the packet to the link layer, which puts it on the backlog queue, marks the network flag for the next "bottom-half" run, and returns control to the current process.

When the process scheduler next runs, it sees that there are networking tasks to do and runs the network "bottom-half". This function pops packets off of the backlog queue, matches them to IP, and passes them to the receive function. The IP layer examines the packet for errors and routes it; the packet will go up to the transport layer (such as TCP or UDP if it is for this host) or sideways to the IP forwarding function. Within the forwarding function, IP checks the packet and sends an ICMP message back to the sender if anything is wrong. It then copies the packet into a new buffer and fragments it if necessary.

Next the IP layer passes the packet to the link layer function, which moves the packet onto the sending device's xmit queue and makes sure the device knows that it has traffic to send. Finally, the device (such as a network card) tells the bus to send the packet.

7.2. IP Forward Walk-Through

7.2.1. Receiving a Packet

- Wake up the receiving device (interrupt)

- Test the medium (device)

- Receive the link header

- Allocate space for the packet

- Tell the bus to put the packet into the buffer

- Put the packet on the backlog queue

- Set the flag to run the network bottom half when possible

- Return control to the current process

7.2.2. Running the Network "Bottom Half"

- Run the network bottom half (scheduler)

- Send any packets that are waiting, to prevent interrupts (net_bh)

- Loop through all packets in the backlog queue and pass each packet up to its Internet reception protocol - IP

- Flush the sending queue again

- Exit the bottom half
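The hand-off between the interrupt-time receive steps and the deferred bottom half can be sketched in user space as follows. This is only an illustration of the queue-and-flag pattern, not kernel code: the names (toy_netif_rx, toy_net_bh, struct backlog) and the queue limit of 4 are assumptions made for the example.

```c
#include <assert.h>
#include <stddef.h>

#define BACKLOG_MAX 4   /* hypothetical limit; the kernel's own limit differs */

struct packet { int id; };

struct backlog {
    struct packet *slots[BACKLOG_MAX];
    size_t head;        /* index of the next packet to dequeue */
    size_t count;       /* packets currently queued */
    int bh_pending;     /* set when the bottom half should run */
    unsigned dropped;   /* packets dropped because the queue was full */
};

/* Interrupt-time side: queue the packet (or drop it) and mark the bottom half. */
static int toy_netif_rx(struct backlog *q, struct packet *p)
{
    assert(q != NULL && p != NULL);
    if (q->count == BACKLOG_MAX) {
        q->dropped++;
        return -1;
    }
    q->slots[(q->head + q->count) % BACKLOG_MAX] = p;
    q->count++;
    q->bh_pending = 1;
    return 0;
}

/* Deferred side: drain the queue, handing each packet to the protocol handler. */
static unsigned toy_net_bh(struct backlog *q, void (*handler)(struct packet *))
{
    unsigned handled = 0;
    assert(q != NULL);
    while (q->count > 0) {
        struct packet *p = q->slots[q->head];
        q->head = (q->head + 1) % BACKLOG_MAX;
        q->count--;
        if (handler != NULL)
            handler(p);   /* stands in for the call into ip_rcv() */
        handled++;
    }
    q->bh_pending = 0;
    return handled;
}
```

The interrupt side does as little as possible (one enqueue and one flag), while the protocol work happens later in toy_net_bh(); that split is the point of the design.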

7.2.3. Examining a Packet in IP

- Check packet for errors - too short? too long? invalid version? checksum error?

- Defragment the packet if necessary

- Get the route for the packet (could be for this host or could need to be forwarded)

- Send the packet to its destination handling routine (retransmission to another host in this case)
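The error checks in the first step can be illustrated with a small user-space routine. It is a sketch under the standard IPv4 header layout, not the kernel's ip_rcv(); the names toy_ip_checksum and toy_ip_check and the result codes are invented for the example, and unlike the kernel it rejects nothing for being "too long" (the kernel trims padding instead).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One's-complement sum over the header; a valid header sums to zero. */
static uint16_t toy_ip_checksum(const uint8_t *hdr, size_t len)
{
    uint32_t sum = 0;
    for (size_t i = 0; i + 1 < len; i += 2)
        sum += ((uint32_t)hdr[i] << 8) | hdr[i + 1];
    while (sum >> 16)
        sum = (sum & 0xFFFF) + (sum >> 16);   /* fold carries back in */
    return (uint16_t)~sum;
}

enum toy_ip_result {
    TOY_IP_OK = 0, TOY_IP_TOO_SHORT, TOY_IP_BAD_VERSION,
    TOY_IP_BAD_LENGTH, TOY_IP_BAD_CHECKSUM
};

/* pkt points at the IP header; len is the number of bytes received. */
static enum toy_ip_result toy_ip_check(const uint8_t *pkt, size_t len)
{
    size_t ihl, tot_len;

    assert(pkt != NULL);
    if (len < 20)
        return TOY_IP_TOO_SHORT;            /* smaller than a minimal header */
    if ((pkt[0] >> 4) != 4)
        return TOY_IP_BAD_VERSION;          /* not IPv4 */
    ihl = (size_t)(pkt[0] & 0x0F) * 4;      /* header length in bytes */
    if (ihl < 20 || ihl > len)
        return TOY_IP_BAD_LENGTH;
    tot_len = ((size_t)pkt[2] << 8) | pkt[3];
    if (tot_len < ihl || tot_len > len)
        return TOY_IP_BAD_LENGTH;           /* header claims more than arrived */
    if (toy_ip_checksum(pkt, ihl) != 0)
        return TOY_IP_BAD_CHECKSUM;
    return TOY_IP_OK;
}
```

The cheap tests (length, version) run before the checksum so that malformed packets are rejected with as little work as possible.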

7.2.4. Forwarding a Packet in IP

- Check TTL field (and decrement it)

- Check packet for improper (undesired) routing

- Send ICMP back to sender if there are any problems

- Copy packet into new buffer and free old one

- Set any IP options

- Fragment packet if it is too big for new destination

- Send the packet to the destination route's device output function
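The TTL and fragmentation decisions above can be sketched as a single user-space function. This is an illustration only: the names and the action codes are hypothetical, the "don't fragment" test reflects standard IPv4 behavior rather than anything stated in this walk-through, and the checksum is patched incrementally (the technique described in RFC 1141) instead of being recomputed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum toy_fwd_action {
    TOY_FWD_SEND,             /* fits the outgoing link as is */
    TOY_FWD_FRAGMENT,         /* must be fragmented before sending */
    TOY_FWD_DROP_TTL,         /* TTL expired; ICMP would go back to sender */
    TOY_FWD_DROP_NEEDS_FRAG   /* too big and DF set; ICMP would go back */
};

/* Decrement TTL (byte 8) and patch the header checksum (bytes 10-11). */
static void toy_decrease_ttl(uint8_t *hdr)
{
    uint32_t check = ((uint32_t)hdr[10] << 8) | hdr[11];
    check += 0x0100;                              /* TTL is the high byte of its word */
    check = (check & 0xFFFF) + (check >> 16);     /* end-around carry */
    hdr[10] = (uint8_t)(check >> 8);
    hdr[11] = (uint8_t)(check & 0xFF);
    hdr[8]--;
}

/* hdr points at a validated IPv4 header; out_mtu is the outgoing link MTU. */
static enum toy_fwd_action toy_forward(uint8_t *hdr, size_t out_mtu)
{
    size_t tot_len;
    int dont_fragment;

    assert(hdr != NULL);
    if (hdr[8] <= 1)
        return TOY_FWD_DROP_TTL;
    tot_len = ((size_t)hdr[2] << 8) | hdr[3];
    dont_fragment = (hdr[6] & 0x40) != 0;         /* DF flag */
    if (tot_len > out_mtu && dont_fragment)
        return TOY_FWD_DROP_NEEDS_FRAG;
    toy_decrease_ttl(hdr);
    return tot_len > out_mtu ? TOY_FWD_FRAGMENT : TOY_FWD_SEND;
}
```

Patching the checksum touches two bytes instead of re-summing the whole header, which matters on a router that handles every transit packet this way.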

7.2.5. Transmitting a Packet

- Put the packet on the device output queue

- Wake up the device

- Wait for the scheduler to run the device driver

- Test the medium (device)

- Send the link header

- Tell the bus to transmit the packet over the medium
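The queue-then-transmit steps can be sketched in user space as follows. All names (struct toy_dev, toy_enqueue, toy_restart, toy_tx_budget) are hypothetical; the pretend driver simply refuses packets once a budget runs out, which stands in for a busy medium.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_TXQ_MAX 8   /* hypothetical queue length */

struct toy_dev {
    int queue[TOY_TXQ_MAX];              /* ids of packets waiting to go out */
    size_t head, count;
    int (*hard_start_xmit)(int pkt_id);  /* returns 0 on success, nonzero if busy */
};

/* Pretend driver: accepts packets only while toy_tx_budget is positive. */
static int toy_tx_budget;
static int toy_hard_start_xmit(int pkt_id)
{
    (void)pkt_id;
    if (toy_tx_budget <= 0)
        return 1;
    toy_tx_budget--;
    return 0;
}

/* Put a packet on the device output queue; returns -1 if the queue is full. */
static int toy_enqueue(struct toy_dev *d, int pkt_id)
{
    assert(d != NULL);
    if (d->count == TOY_TXQ_MAX)
        return -1;
    d->queue[(d->head + d->count) % TOY_TXQ_MAX] = pkt_id;
    d->count++;
    return 0;
}

/* Pop packets and hand them to the driver; requeue at the head on failure. */
static size_t toy_restart(struct toy_dev *d)
{
    size_t sent = 0;
    assert(d != NULL && d->hard_start_xmit != NULL);
    while (d->count > 0) {
        int pkt = d->queue[d->head];                 /* pop */
        d->head = (d->head + 1) % TOY_TXQ_MAX;
        d->count--;
        if (d->hard_start_xmit(pkt) != 0) {
            d->head = (d->head + TOY_TXQ_MAX - 1) % TOY_TXQ_MAX;
            d->queue[d->head] = pkt;                 /* requeue, order preserved */
            d->count++;
            break;
        }
        sent++;
    }
    return sent;
}
```

Requeueing at the head rather than the tail keeps packets in their original order when the device becomes ready again.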

7.3. Linux Functions

The following is an alphabetic list of the Linux kernel functions that are most important to IP forwarding, where they are in the source code, and what they do. To follow the function calls, start with DEVICE_rx().

dev_queue_xmit() - net/core/dev.c (579)

calls start_bh_atomic()

if device has a queue

calls enqueue() to add packet to queue

calls qdisc_wakeup() [= qdisc_restart()] to wake device

else calls hard_start_xmit()

calls end_bh_atomic()

DEVICE->hard_start_xmit() - device dependent, drivers/net/DEVICE.c

tests to see if medium is open

sends header

tells bus to send packet

updates status

>>> DEVICE_rx() - device dependent, drivers/net/DEVICE.c

(gets control from interrupt)

performs status checks to make sure it should be receiving

calls dev_alloc_skb() to reserve space for packet

gets packet off of system bus

calls eth_type_trans() to determine protocol type

calls netif_rx()

updates card status

(returns from interrupt)

ip_finish_output() - include/net/ip.h (140)

sets sending device to output device for given route

calls output function for destination [= dev_queue_xmit()]

ip_forward() - net/ipv4/ip_forward.c (72)

checks for router alert

if packet is not meant for any host, drops it

if TTL has expired, drops packet and sends ICMP message back

if strict route cannot be followed, drops packet and sends ICMP message back to sender

if necessary, sends ICMP message telling sender packet is redirected

copies and releases old packet

decrements TTL

if there are options, calls ip_forward_options() to set them

calls ip_send()

ip_rcv() - net/ipv4/ip_input.c (395)

examines packet for errors:

invalid length (too short or too long)

incorrect version (not 4)

invalid checksum

calls __skb_trim() to remove padding

defrags packet if necessary

calls ip_route_input() to route packet

examines and handles IP options

returns skb->dst->input() [= ip_forward()]

ip_route_input() - net/ipv4/route.c (1366)

calls rt_hash_code() to get index for routing table

loops through routing table (starting at hash) to find match for packet

if it finds match:

updates stats for route (time and usage)

sets packet destination to routing table entry

returns success

else

checks for multicasting addresses

returns result of ip_route_input_slow() (attempted routing)

ip_route_output_slow() - net/ipv4/route.c (1421)

if source address is known, looks up output device

if destination address is unknown, sets up loopback

calls fib_lookup() to find route

allocates memory for new routing table entry

initializes table entry with source, destination, TOS, output device, flags

calls rt_set_nexthop() to find next destination

returns rt_intern_hash(), which installs route in routing table

ip_send() - include/net/ip.h (162)

calls ip_fragment() if packet is too big for device

calls ip_finish_output()

net_bh() - net/core/dev.c (835)

(run by scheduler)

if there are packets waiting to go out, calls qdisc_run_queues() (see sending section)

while the backlog queue is not empty

let other bottom halves run

call skb_dequeue() to get next packet

if the packet is for someone else (FASTROUTED) put onto send queue

loop through protocol lists (taps and main) to match protocol type

call pt_prev->func() [= ip_rcv()] to pass packet to appropriate protocol

call qdisc_run_queues() to flush output (if necessary)

netif_rx() - net/core/dev.c (757)

puts time in skb->stamp

if backlog queue is too full, drops packet

else

calls skb_queue_tail() to put packet into backlog queue

marks bottom half for later execution

qdisc_restart() - net/sched/sch_generic.c (50)

pops packet off queue

calls dev->hard_start_xmit()

updates status

if there was an error, requeues packet

rt_intern_hash() - net/ipv4/route.c (526)

puts new route in routing table

Chapter 8

8. Basic Internet Protocol Routing

This chapter presents the basics of IP Routing. It provides an overview of how routing works, examines how routing tables are established and updated, and summarizes the implementation code within the kernel.

8.1. Overview

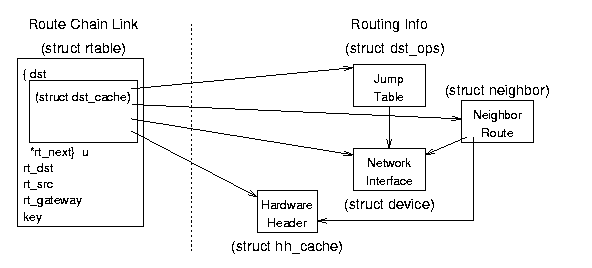

Linux maintains three sets of routing data - one for computers that are directly connected to the host (via a LAN, for example) and two for computers that are only indirectly connected (via IP networking). Examine Figure 8.1 to see how entries for a computer in the general example might look.

Figure 8.1: General Routing Table Example.

The neighbor table contains address information for computers that are physically connected to the host (hence the name "neighbor"). It includes information on which device connects to which neighbor and what protocols to use in exchanging data. Linux uses the Address Resolution Protocol (ARP) to maintain and update this table; it is dynamic in that entries are added when needed but eventually disappear if not used again within a certain time. (However, administrators can set up entries to be permanent if doing so makes sense.)

Linux uses two complex sets of routing tables to maintain IP addresses: an all-purpose Forwarding Information Base (FIB) with directions to every possible address, and a smaller (and faster) routing cache with data on frequently used routes. When an IP packet needs to go to a distant host, the IP layer first checks the routing cache for an entry with the appropriate source, destination, and type of service. If there is such an entry, IP uses it. If not, IP requests the routing information from the more complete (but slower) FIB, builds a new cache entry with that data, and then uses the new entry. While the FIB entries are semi-permanent (they usually change only when routers come up or go down) the cache entries remain only until they become obsolete (they are unused for a "long" period).
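The cache-first lookup described above can be sketched in user space as follows. It is a simplified model, not the kernel's routing cache: each hash slot holds a single entry (the kernel chains entries), toy_slow_path_lookup() is a made-up stand-in for the FIB, and all names and the table size are assumptions.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_CACHE_SLOTS 16   /* hypothetical cache size */

struct toy_cache_entry {
    int valid;
    uint32_t src, dst;
    uint8_t tos;
    int out_dev;
    unsigned long last_use;  /* time of the most recent hit */
};

static struct toy_cache_entry toy_cache[TOY_CACHE_SLOTS];
static unsigned toy_slow_lookups;   /* counts trips to the slow path */

/* Stand-in for the FIB: 10.x.x.x goes out device 0, everything else device 1. */
static int toy_slow_path_lookup(uint32_t dst)
{
    toy_slow_lookups++;
    return (dst >> 24) == 10 ? 0 : 1;
}

static unsigned toy_hash(uint32_t src, uint32_t dst, uint8_t tos)
{
    return (src ^ dst ^ tos) % TOY_CACHE_SLOTS;
}

/* Check the cache first; on a miss, ask the slow path and cache the answer. */
static int toy_route(uint32_t src, uint32_t dst, uint8_t tos, unsigned long now)
{
    struct toy_cache_entry *e = &toy_cache[toy_hash(src, dst, tos)];

    if (e->valid && e->src == src && e->dst == dst && e->tos == tos) {
        e->last_use = now;
        return e->out_dev;
    }
    e->valid = 1;
    e->src = src;
    e->dst = dst;
    e->tos = tos;
    e->out_dev = toy_slow_path_lookup(dst);
    e->last_use = now;
    return e->out_dev;
}

/* Drop entries that have gone unused for longer than max_idle. */
static unsigned toy_cache_expire(unsigned long now, unsigned long max_idle)
{
    unsigned removed = 0;
    for (size_t i = 0; i < TOY_CACHE_SLOTS; i++) {
        if (toy_cache[i].valid && now - toy_cache[i].last_use > max_idle) {
            toy_cache[i].valid = 0;
            removed++;
        }
    }
    return removed;
}
```

Calling toy_route() twice with the same source, destination, and type of service reaches the slow path only once; after toy_cache_expire() removes the entry, the next call pays for the slow lookup again.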

8.2. Routing Tables

Note: within these tables, there are references to variables of types such as u32 (host byte order) and __u32 (network byte order). On the Intel architecture both are equivalent to unsigned ints, and values are in fact translated between the two orders (using the ntohl function) anyway; the type merely gives an indication of the order in which the value it contains is stored.
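The difference between the two byte orders can be seen with the standard POSIX conversion functions htonl() and ntohl(). The helper names below (toy_store_addr, toy_load_addr) are invented for this example; the sketch stores an address in network order, as it would appear in a packet, and reads it back into host order.

```c
#include <arpa/inet.h>   /* htonl(), ntohl() */
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Write a host-order address into a buffer in network (big-endian) order. */
static void toy_store_addr(uint32_t host_order, uint8_t out[4])
{
    uint32_t net_order = htonl(host_order);
    assert(out != NULL);
    memcpy(out, &net_order, 4);
}

/* Read a network-order address from a buffer and return it in host order. */
static uint32_t toy_load_addr(const uint8_t in[4])
{
    uint32_t net_order;
    assert(in != NULL);
    memcpy(&net_order, in, 4);
    return ntohl(net_order);
}
```

Storing 0xC0A80001 (192.168.0.1) always places the bytes 192, 168, 0, 1 in the buffer in that order, whatever the byte order of the host; on a big-endian machine the conversions do nothing.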

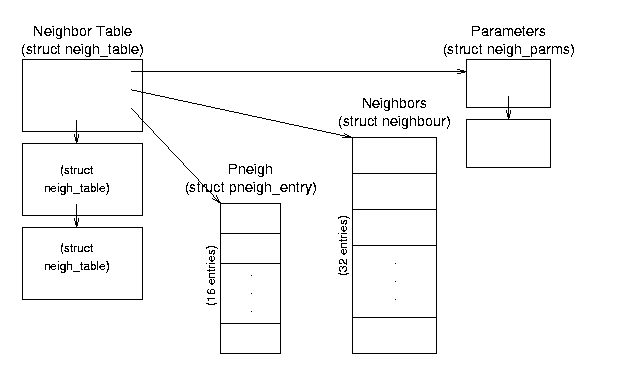

8.2.1. The Neighbor Table

The Neighbor Table (whose structure is shown in Figure 8.2) contains information about computers that are physically linked with the host computer. (Note that the source code uses the British spelling, "neighbour".) Entries are not (usually) persistent; this table may contain no entries (if the computer has not passed any network traffic recently) or may contain as many entries as there are computers physically connected to its network (if it has communicated with all of them recently). Entries in the table are actually other table structures which contain addressing, device, protocol, and statistical information.

Figure 8.2: Neighbor Table Data Structure Relationships.

struct neigh_table *neigh_tables - this global variable is a pointer to a list of neighbor tables; each table contains a set of general functions and data and a hash table of specific information about a set of neighbors. This is a very detailed, low level table containing specific information such as the approximate transit time for messages, queue sizes, device pointers, and pointers to device functions.

Neighbor Table (struct neigh_table) Structure - this structure (a list element) contains common neighbor information and tables of neighbor data and pneigh data. All computers connected through a single type of connection (such as a single Ethernet card) will be in the same table.

struct neigh_table *next - pointer to the next table in the list.

struct neigh_parms parms - structure containing message travel time, queue length, and statistical information; this is actually the head of a list.

struct neigh_parms *parms_list - pointer to a list of information structures.

struct neighbour *hash_buckets[] - hash table of neighbors associated with this table; there are NEIGH_HASHMASK+1 (32) buckets.

struct pneigh_entry *phash_buckets[] - hash table of structures containing device pointers and keys; there are PNEIGH_HASHMASK+1 (16) buckets.

- Other fields include timer information, function pointers, locks, and statistics.

Neighbor Data (struct neighbour) Structure - these structures contain the specific information about each neighbor.

struct device *dev - pointer to the device that is connected to this neighbor.

__u8 nud_state - status flags; values can be incomplete, reachable, stale, etc.; also contains state information for permanence and ARP use.

struct hh_cache *hh - pointer to cached hardware header for transmissions to this neighbor.

struct sk_buff_head arp_queue - queue of packets held pending ARP resolution for this neighbor.

- Other fields include list pointers, function (table) pointers, and statistical information.
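The relationship between a table and its hashed neighbor entries can be sketched with heavily reduced structures. These are not the kernel definitions: only a few fields are modeled, the hash function is an arbitrary choice (in the kernel it is supplied per table), and every toy_ name is an assumption. The bucket count of 32 follows the description above.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_NEIGH_HASHMASK 0x1F   /* 32 buckets */

enum toy_nud { TOY_INCOMPLETE, TOY_REACHABLE, TOY_STALE };

struct toy_neighbour {
    struct toy_neighbour *next;   /* next entry in the same bucket */
    uint32_t ip;                  /* lookup key: neighbor's IP address */
    int dev_index;                /* stands in for struct device *dev */
    enum toy_nud nud_state;
};

struct toy_neigh_table {
    struct toy_neighbour *hash_buckets[TOY_NEIGH_HASHMASK + 1];
};

/* Arbitrary mixing function for the example. */
static unsigned toy_neigh_hash(uint32_t ip, int dev_index)
{
    uint32_t h = ip ^ (uint32_t)dev_index;
    h ^= h >> 16;
    h ^= h >> 8;
    return h & TOY_NEIGH_HASHMASK;
}

/* Link a caller-owned entry into the front of its bucket. */
static void toy_neigh_add(struct toy_neigh_table *t, struct toy_neighbour *n)
{
    unsigned b;
    assert(t != NULL && n != NULL);
    b = toy_neigh_hash(n->ip, n->dev_index);
    n->next = t->hash_buckets[b];
    t->hash_buckets[b] = n;
}

/* Find the entry for this address on this device, or NULL if none exists. */
static struct toy_neighbour *toy_neigh_lookup(const struct toy_neigh_table *t,
                                              uint32_t ip, int dev_index)
{
    struct toy_neighbour *n;
    assert(t != NULL);
    for (n = t->hash_buckets[toy_neigh_hash(ip, dev_index)]; n != NULL; n = n->next)
        if (n->ip == ip && n->dev_index == dev_index)
            return n;
    return NULL;
}
```

The device is part of the key, so the same address reached through two different devices yields two separate entries.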

8.2.2. The Forwarding Information Base

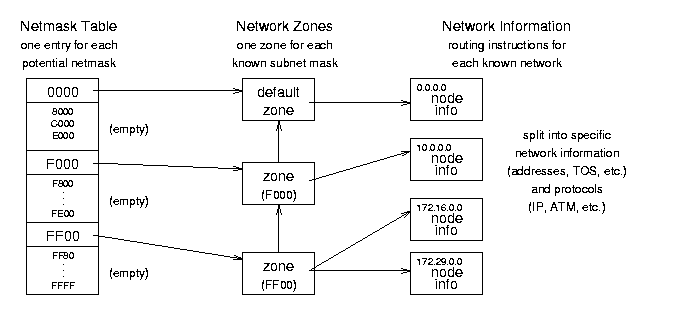

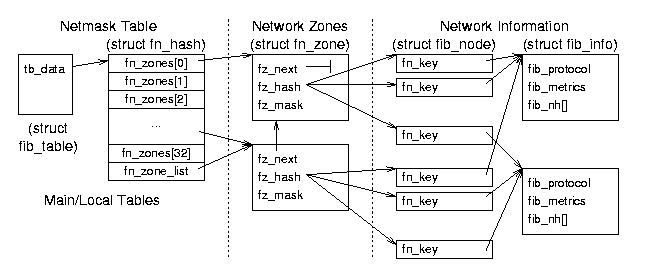

Figure 8.3: Forwarding Information Base (FIB) conceptual organization.

The Forwarding Information Base (FIB) is the most important routing structure in the kernel; it is a complex structure that contains the routing information needed to reach any valid IP address by its network mask. Essentially it is a large table with general address information at the top and very specific information at the bottom. The IP layer enters the table with the destination address of a packet and compares it to the most specific netmask to see if they match. If they do not, IP goes on to the next most general netmask and again compares the two. When it finally finds a match, IP copies the "directions" to the distant host into the routing cache and sends the packet on its way. See Figures 8.3 and 8.4 for the organization and data structures used in the FIB. (Note that Figure 8.3 shows some different FIB capabilities, like two sets of network information for a single zone, and so does not follow the general example.)
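The most-specific-first search described above can be sketched over a flat array of routes. The real FIB organizes entries into zones and hash tables; this sketch only illustrates the matching order, and the names (struct toy_route, toy_prefix_mask, toy_fib_match) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

struct toy_route {
    uint32_t network;   /* network address, host byte order */
    int prefix_len;     /* number of leading one bits in the netmask */
    int out_dev;        /* the "directions": which device to use */
};

/* Build a netmask from a prefix length (0 gives the default route mask). */
static uint32_t toy_prefix_mask(int prefix_len)
{
    assert(prefix_len >= 0 && prefix_len <= 32);
    return prefix_len == 0 ? 0 : (uint32_t)(0xFFFFFFFFu << (32 - prefix_len));
}

/* Try the most specific netmask first, then each more general one in turn. */
static const struct toy_route *toy_fib_match(const struct toy_route *tbl,
                                             size_t n, uint32_t dst)
{
    assert(tbl != NULL);
    for (int len = 32; len >= 0; len--)
        for (size_t i = 0; i < n; i++)
            if (tbl[i].prefix_len == len &&
                (dst & toy_prefix_mask(len)) == tbl[i].network)
                return &tbl[i];
    return NULL;   /* no route, not even a default */
}
```

With routes for 10.0.0.0/8 and 10.1.0.0/16 both present, a packet for 10.1.9.153 takes the /16 route because that mask is tried first; a table containing a /0 entry never returns NULL.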