About Me

I've written media-related Linux drivers for consumer and embedded devices since 2001 and I'm one of the Video4Linux core developers. I've given Linux media-related talks at FOSDEM, LPC and ELC. I currently work as an embedded Linux consultant through my company Ideas on Board, where I develop a wide range of embedded drivers including DRM/KMS and V4L2.

Project Details

The Media Controller kernel API was designed to expose detailed information about media devices, and video capture devices in particular, to userspace. While the API is not limited to a particular type of device, it has so far been used mostly to expose the topology of complex embedded media devices to applications, allowing fine-grained control over the device that wasn't possible through the V4L API alone.

Handling those devices in userspace isn't simple due to the hardware complexity. Careful testing of applications and userspace libraries is thus needed, especially when they aim to support a wide range of devices. The devices against which code needs to be tested are embedded and not always easily available to developers, which makes testing even harder.

A similar issue exists with the V4L API and has been addressed by a kernel driver that emulates a V4L device without requiring hardware. The goal of this project is to create a similar driver for the Media Controller API.

Resources

- The Media Controller API documentation is available at http://linuxtv.org/downloads/v4l-dvb-apis/media_controller.html (built from the DocBook documentation in the kernel source tree).

- Documentation/media-framework.txt in the kernel source tree.

- The Media Controller development mailing list is linux-media@vger.kernel.org.

- Media developers hang around in the #v4l IRC channel on freenode.net.

Tasks for the Application Period

Tasks must be completed in the order listed. The task list isn't complete yet.

Building the Media Controller Test Tool

A userspace utility named media-ctl is available as part of the v4l-utils package. To test the code that will be developed for this project, the latest version of media-ctl will be needed. Furthermore, as changes to media-ctl might be needed as well, the utility should be compiled from its sources.

- Clone the v4l-utils git tree. The git web overview page is available at http://git.linuxtv.org/cgit.cgi/v4l-utils.git/ and lists the git URL.

- The build system is based on autotools. Configure and build the software. This should produce a media-ctl wrapper script in the utils/media-ctl/ directory that executes the compiled binary in utils/media-ctl/.libs. You can either install the media-ctl binary locally using 'make install' or run it from the source tree using the wrapper script.

- Run the media-ctl tool without arguments. It should output a help text.

- Perform a small modification to the function that prints the help text, recompile the software, and run media-ctl to ensure your modification has been taken into account.

The purpose of this first task is not to submit a patch, but to ensure you can download, modify, compile and run the media-ctl test tool as this will be needed later during the course of the project.

Open-source development works based on trust. Proof that you have completed the first task properly isn't strictly required. If you don't complete it you will likely have problems later, so this is really a task designed to help you get started with the project. Of course if you're not sure whether your results are correct and would like guidance, or have any other questions, please ask on the outreachy-kernel mailing list and CC me.

Testing the Test Tool with a Hardware Device

This task is optional but recommended.

While no access to hardware is needed to implement a virtual Media Controller driver, the API is easier to understand when testing on a real device. Any USB camera compatible with the USB Video Class can be used for first experiments with the Media Controller API.

The uvcvideo kernel driver implements the Media Controller API when the kernel is built with Media Controller support.

- Clone the mainline kernel git tree, configure and build it. You can use your distribution's running kernel configuration file as a default. Make sure to enable both the CONFIG_MEDIA_CONTROLLER and the CONFIG_USB_VIDEO_CLASS options (the latter can be enabled as a module).

- Install the kernel you have built. Make sure not to overwrite your distribution's kernel, but instead install the new kernel as a multi-boot option. This will allow you to reboot into a working system should the newly built kernel fail to boot properly.

- Plug in a UVC-compatible camera and check that the uvcvideo module gets loaded (if you have built it as a module) and that the driver prints successful device detection messages in the kernel log.

- Run the media-ctl tool with the -p option to display the full device topology in text form and study it.

- Run the media-ctl tool with the --print-dot option and redirect the output to a file named uvc.dot. Process that file using the dot utility (part of the graphviz package) with 'dot -Tps -o uvc.ps uvc.dot' to generate a graph representing the device topology in PostScript form. Open the generated PostScript file and study how it relates to the text form of the device topology.

Testing the Virtual V4L Driver

The Virtual V4L driver is available in the drivers/media/platform/vivid/ directory of the kernel sources.

- If the driver isn't selected in the kernel configuration, select, build and install it.

- The vivid driver isn't associated with a particular device and is thus not loaded automatically. Load it manually with modprobe.

- Make sure that a device node has been created in /dev. The device node should be named /dev/video[0-9] depending on the number of other V4L devices present.

- Use your favourite webcam application to open the device and display video. The driver will generate a colour bar test pattern by default. If you have no favourite webcam application use qv4l2 from the v4l-utils package.

Creating a Skeleton Driver

The skeleton driver will serve as the base for the virtual Media Controller driver.

- Follow the Hello World kernel module example from chapter 2 of Linux Device Drivers, Third Edition to create the skeleton driver. Note that the book was written based on the 2.6.10 kernel, so you might need to adapt the code to kernel API changes that have occurred since then. The module should be placed in drivers/media/.

- Compile the module, load it and make sure it prints the expected Hello World message in the kernel log.

- Don't forget to commit your work in your kernel git tree.

Create a Media Controller Device in the Skeleton Driver

This task starts building the virtual Media Controller infrastructure in the skeleton driver.

- Create, initialize and register a media_device instance when the skeleton driver initializes.

- Make sure that the device is unregistered and freed when the driver is removed.

- Verify that the corresponding media device node is created, and use media-ctl to print device information.
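Besides media-ctl, the verification step above can be done with a few lines of userspace C. The following is a minimal sketch, assuming the kernel UAPI header linux/media.h is installed; the function names query_media_device and print_media_device are hypothetical, and the device path (typically /dev/media0 when a single media device is registered) is an assumption that depends on your system.

```c
#include <assert.h>
#include <fcntl.h>
#include <stdio.h>
#include <string.h>
#include <sys/ioctl.h>
#include <unistd.h>

#include <linux/media.h>

/*
 * Query basic information about a media device node (hypothetical helper).
 * Returns 0 on success, -1 if the node cannot be opened or the ioctl fails.
 */
int query_media_device(const char *path, struct media_device_info *info)
{
	int fd, ret;

	assert(path && info);

	fd = open(path, O_RDONLY);
	if (fd < 0)
		return -1;

	memset(info, 0, sizeof(*info));
	ret = ioctl(fd, MEDIA_IOC_DEVICE_INFO, info);
	close(fd);

	return ret < 0 ? -1 : 0;
}

/* Print the identification strings filled in by the driver. */
void print_media_device(const struct media_device_info *info)
{
	assert(info);
	printf("driver: %s\nmodel: %s\nbus info: %s\n",
	       info->driver, info->model, info->bus_info);
}
```

Calling query_media_device("/dev/media0", &info) followed by print_media_device(&info) should show the driver and model names set by the skeleton driver, provided the device node exists and is readable.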

Add Entities to the Media Controller Device

It's now time to add entities to the device to build a media graph.

- Create, initialize and register several entities with the media_device. Those entities should have varying numbers of pads.

- Create links between the entities to build a graph. You can mimic one of the graphs available at http://www.ideasonboard.org/media/ or create your own.

- Make sure that everything is unregistered and freed correctly when the driver is removed.

- Use media-ctl to print the graph and verify that it matches what you have implemented in the driver.
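To see what media-ctl does when it prints the graph, the entity enumeration can be sketched in userspace C. This is an illustrative sketch only, assuming linux/media.h is installed; list_media_entities is a hypothetical helper name and the device path is whatever node your driver registered.

```c
#include <assert.h>
#include <fcntl.h>
#include <stdio.h>
#include <string.h>
#include <sys/ioctl.h>
#include <unistd.h>

#include <linux/media.h>

/*
 * Print and count the entities of the media device at path
 * (hypothetical helper). Returns the number of entities found,
 * or -1 if the device node cannot be opened.
 */
int list_media_entities(const char *path)
{
	struct media_entity_desc entity;
	__u32 id = 0;
	int count = 0;
	int fd;

	assert(path);

	fd = open(path, O_RDONLY);
	if (fd < 0)
		return -1;

	for (;;) {
		memset(&entity, 0, sizeof(entity));
		/* Request the first entity whose ID is greater than id. */
		entity.id = id | MEDIA_ENT_ID_FLAG_NEXT;
		/* The ioctl fails once no further entity exists. */
		if (ioctl(fd, MEDIA_IOC_ENUM_ENTITIES, &entity) < 0)
			break;

		printf("entity %u: %s (%u pads, %u links)\n",
		       (unsigned int)entity.id, entity.name,
		       (unsigned int)entity.pads, (unsigned int)entity.links);
		id = entity.id;
		count++;
	}

	close(fd);
	return count;
}
```

The count returned for your device should match the number of entities registered by the driver, and the pad counts should match what you configured for each entity.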

Tasks for the Internship Period

The Virtual Media Controller driver is divided into two major parts.

Graph Management

The goal of the Virtual Media Controller device is to expose a graph of media entities to userspace. To make this truly useful, the graph topology needs to be configurable, ideally from userspace. Tasks for this part are:

- Design a graph topology configuration data structure.

- Specify several graphs through different hardcoded topology configurations.

- Research how to pass graph topology from userspace to the driver. Possible candidates for that API are sysfs, Device Tree fragments or custom ioctls.

- Implement initial configuration of the graph topology from userspace.

- Add support for adding and deleting entities at runtime to the userspace API. This could involve updates to the media controller core code.
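As a starting point for the first two tasks, one possible shape for a topology description is a table of entities plus a table of links that refer to entities by index. The sketch below is plain C that compiles in userspace; every name in it (the vmc_ prefix, the structures, the example graph) is hypothetical and not part of any existing kernel API, and the real design is up to you.

```c
#include <assert.h>
#include <stddef.h>

/* All names below are hypothetical; none of them exist in the kernel. */

struct vmc_entity_cfg {
	const char *name;
	unsigned int num_pads;
};

struct vmc_link_cfg {
	unsigned int source;		/* index into entities[] */
	unsigned int source_pad;
	unsigned int sink;		/* index into entities[] */
	unsigned int sink_pad;
};

struct vmc_topology_cfg {
	const struct vmc_entity_cfg *entities;
	size_t num_entities;
	const struct vmc_link_cfg *links;
	size_t num_links;
};

/* One hardcoded example graph: sensor -> debayer -> capture. */
static const struct vmc_entity_cfg simple_entities[] = {
	{ "sensor", 1 },
	{ "debayer", 2 },
	{ "capture", 1 },
};

static const struct vmc_link_cfg simple_links[] = {
	{ 0, 0, 1, 0 },		/* sensor pad 0 -> debayer pad 0 */
	{ 1, 1, 2, 0 },		/* debayer pad 1 -> capture pad 0 */
};

const struct vmc_topology_cfg vmc_simple_topology = {
	.entities = simple_entities,
	.num_entities = sizeof(simple_entities) / sizeof(simple_entities[0]),
	.links = simple_links,
	.num_links = sizeof(simple_links) / sizeof(simple_links[0]),
};

/* Return 1 if every link refers to an existing entity and pad, else 0. */
int vmc_topology_is_valid(const struct vmc_topology_cfg *cfg)
{
	size_t i;

	assert(cfg);

	for (i = 0; i < cfg->num_links; i++) {
		const struct vmc_link_cfg *l = &cfg->links[i];

		if (l->source >= cfg->num_entities ||
		    l->sink >= cfg->num_entities)
			return 0;
		if (l->source_pad >= cfg->entities[l->source].num_pads ||
		    l->sink_pad >= cfg->entities[l->sink].num_pads)
			return 0;
	}

	return 1;
}
```

Index-based links keep the tables easy to hardcode and easy to validate before any entity is registered, which matters once the same structure is filled from userspace input instead of from static data.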

Video Pipeline

With a virtual entity graph in place, support for virtual video sources and video processing entities would allow more elaborate usage of the driver by userspace video applications. This part extends the driver by adding virtual V4L2 video devices and sub-devices to the graph and implementing the corresponding V4L2 API.

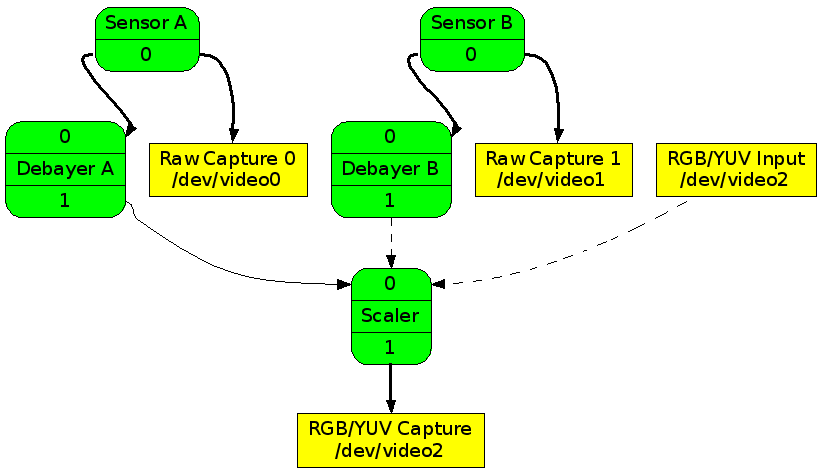

The target is to implement the following entities.

- A raw sensor subdevice, with just one Bayer format, one size and one frame interval. Support for horizontal and vertical flip should be included since that affects the format and size.

- A debayer subdevice that takes Bayer data and converts it to various RGB/YUV formats.

- A scaler subdevice that takes RGB and scales it up or down by up to a factor of 4.

- V4L2 video capture and output devices.
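To illustrate why flipping affects the format of the raw sensor entity, the sketch below computes how the Bayer order changes under horizontal and vertical flips. It is a simplified model, assuming the flip mirrors the whole frame and that the frame width and height are even; the enum and function names are hypothetical, and a real driver would report media bus format codes such as MEDIA_BUS_FMT_SRGGB8_1X8 instead.

```c
#include <assert.h>
#include <stdbool.h>

/*
 * Hypothetical Bayer order enum. Each value encodes the position of the
 * red pixel in the 2x2 tile as (row * 2 + column).
 */
enum bayer_order {
	BAYER_RGGB = 0,	/* R G / G B: red at row 0, column 0 */
	BAYER_GRBG = 1,	/* G R / B G: red at row 0, column 1 */
	BAYER_GBRG = 2,	/* G B / R G: red at row 1, column 0 */
	BAYER_BGGR = 3,	/* B G / G R: red at row 1, column 1 */
};

/*
 * Return the Bayer order seen after mirroring the frame. With even
 * dimensions, a horizontal flip swaps the column parity of every pixel
 * and a vertical flip swaps the row parity.
 */
enum bayer_order bayer_order_after_flip(enum bayer_order order,
					bool hflip, bool vflip)
{
	unsigned int value = (unsigned int)order;

	assert(value <= BAYER_BGGR);

	if (hflip)
		value ^= 1;	/* toggle the column bit */
	if (vflip)
		value ^= 2;	/* toggle the row bit */

	return (enum bayer_order)value;
}
```

For example, a sensor that natively produces RGGB data would report GRBG with the horizontal flip enabled and BGGR with both flips enabled, so the format on the sensor pad has to be updated whenever a flip control changes.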

The test pattern generator and scaler code from the vivid driver should be reused to implement the sensor and scaler subdevices.

The final target is a graph containing two sensor and debayer instances, one scaler instance, three video capture instances and one video output instance, as shown in the figure.

As this could require more time than available for the internship, the internship target is a pipeline consisting of one sensor subdevice and one V4L2 video capture device.

If time permits, possible extensions that can be considered are:

- Cropping and composing support for the sensors and scaler.

- Support for more mediabus codes, sizes and intervals on the sensors.

- V4L2 Image Source and Image Processing controls support.

- HDMI receiver/transmitter subdevices.

- Flash subdevice.

Scope and Schedule

The two parts are mostly independent of each other. Once the graph topology configuration data structure is implemented, support for dynamic graph modifications and for the video pipeline can be developed separately. As the three-month internship period will likely not be long enough to implement all proposed features, you have the freedom to pick the tasks you find the most interesting (this should of course be discussed and agreed upon) and propose a timeline. The main goal for the internship period is to get a driver merged (or on its way to being merged) in the mainline kernel with enough features to make it useful.

Contact Info

Email: <laurent.pinchart AT ideasonboard DOT com>

IRC: pinchartl on freenode.net and irc.oftc.net