How does the kernel implements Hashtables?

It wasn't uncommon, when working with older versions of the kernel, to encounter redundant code managing classical data structures such as linked lists, hashtables, etc. Recent versions of the kernel now features a "unified" and very smart generic API to manipulate such data structures. Understanding this API can help you make sense of tidbits of kernel code here and there but is also a great opportunity to improve your C programming knowledge by reading some very interestingly put together code.

First things first, the following implementation of the hashtable relies on chaining elements upon a collision. So every bucket in the hashtable is a linked list which will hold all objects that are hashed to the same bucket. As every data structure course teaches, the idea is that the hashtable is big enough to contain all elements in different buckets, and the hash function should be good enough to distribute them uniformly, but just in case a collision does happen, the elements are chained. A good hash function should make sure you get O(1) elements into every bucket.

Let's take a look at the kernel's hashtable API from the perspective of "how would I use it in my own code?" (e.g. a Loadable Kernel Module). Let's start by defining a data structure that we will then embed in a kernel hashtable:

struct mystruct {

int data ;

} ;To be able to link each element of type struct mystruct to others, we need to add a struct list_head field:

struct mystruct {

int data ;

struct hlist_node my_hash_list ;

} ;When first encountering this, most people are confused because they have been taught to implement hashtables differently. So Why do we have a pointer to hlist_node? Why do do we need a list in the first place? First you may wish to read FAQ/LinkedLists to grasp the idea of linked lists in the kernel. The main difference between the list_node and hlist_node structs is the fact that list_node is a cyclic linked list, while hlist_node is terminated by a null pointer.

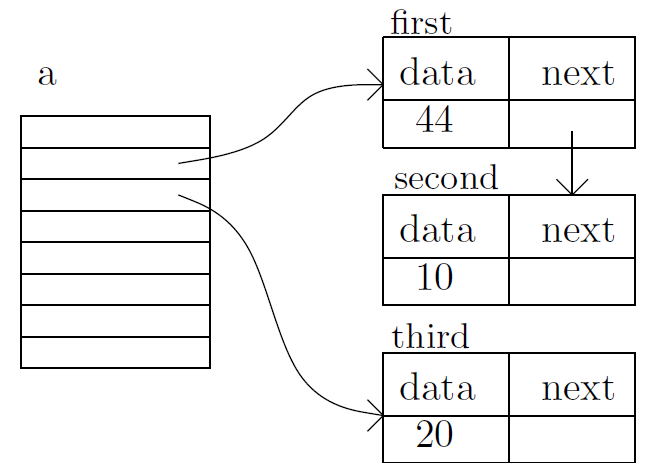

The hashtable is an array of struct hlist_head pointers, where each one points to a different list, and each one of those lists holds all elements that are hashed to the same bucket. So every element is essentially part of a hlist and the hashtable only holds the head of these lists.

Back to our example, let's create our first variable representing an element that will be hashed:

struct mystruct first = {

.data = 10,

.my_hash_list = 0 /* Will be initilaized when added to the hashtable */

} ;Now we have an element that can be added to a hashtable, so lets define a hashtable.

15 #define DEFINE_HASHTABLE(name, bits) \

16 struct hlist_head name[1 << (bits)] = \

17 { [0 ... ((1 << (bits)) - 1)] = HLIST_HEAD_INIT }Notice that we use the number of bits to define a hashtable and not the size, so the following declaration will have 8 buckets.

DEFINE_HASHTABLE(a, 3);

Now we have a hashtable called a with 8 buckets each consisting of a list (pointing to NULL), and an element that we want to add to the hashtable. But first we need to decide about a key for the element. In this example we simply use the data as a hash key, note that this is NOT the bucket it's going into.

57 58 /** 59 * hash_add_rcu - add an object to a rcu enabled hashtable 60 * @hashtable: hashtable to add to 61 * @node: the &struct hlist_node of the object to be added 62 * @key: the key of the object to be added 63 */ 64 #define hash_add_rcu(hashtable, node, key) \ 65 hlist_add_head_rcu(node, &hashtable[hash_min(key, HASH_BITS(hashtable))]) 66

As you can see the hash_min macro decide into which bucket the element will go. It is using golden ratio constants to calculate, deterministically, a random number from any key. You don't need to worry about the size or randomness of the key.

Let's add the first element to the hashtable:

hash_add(a, &first.next, first.data);

This will add the element first to the hashtable, notice that our hashtable has only 8 buckets but the key is 10. This is fine since the hash_min macro takes care of the size and randomness.

Let's add another two elements:

struct mystruct second = {

.data = 20,

.my_hash_list = 0 /* Will be initilaized when added to the hashtable */

} ;

struct mystruct third = {

.data = 44,

.my_hash_list = 0 /* Will be initilaized when added to the hashtable */

} ;

hash_add(a, &second.next, second.data);

hash_add(a, &third .next, third .data);Now we have two elements in the hashtable, and we can work with them. For iterating over all elements we can use the hash_for_each macro which translates to a for loop that loops lists in all buckets.

114 /** 115 * hash_for_each - iterate over a hashtable 116 * @name: hashtable to iterate 117 * @bkt: integer to use as bucket loop cursor 118 * @obj: the type * to use as a loop cursor for each entry 119 * @member: the name of the hlist_node within the struct 120 */ 121 #define hash_for_each(name, bkt, obj, member) \ 122 for ((bkt) = 0, obj = NULL; obj == NULL && (bkt) < HASH_SIZE(name);\ 123 (bkt)++)\ 124 hlist_for_each_entry(obj, &name[bkt], member)

The most inner macro hlist_for_each_entry is implemented in the hlist file and simply expands to another for loop which iterates over all members of the list. The outer for loop iterates over all buckets in the hashtable (all indices of the array). The hlist_for_each_entry macro can be confusing, so you may want to read the second half of FAQ/LinkedLists to get an idea of how it is working. In short you need to define a pointer obj to work with while iterating and an integer bkt that will tell you which bucket you are now working with (probably the bkt will be useless in most cases).

Back to our example, say we want to print all elements of the list.

int bkt;

struct mystruct * current;

hash_for_each_entry(a, bkt, current, next){

printk(KERN_INFO "data=%d is in bucket %d\n", current->data, bkt);

}Now we can see, on a 64 bit machine, that first and third have been hashed to bucket number 1 and second has been hashed to bucket number 2.

See attachment for a visual representation of what we have in memory.